Revolutionizing AI and Space Tech

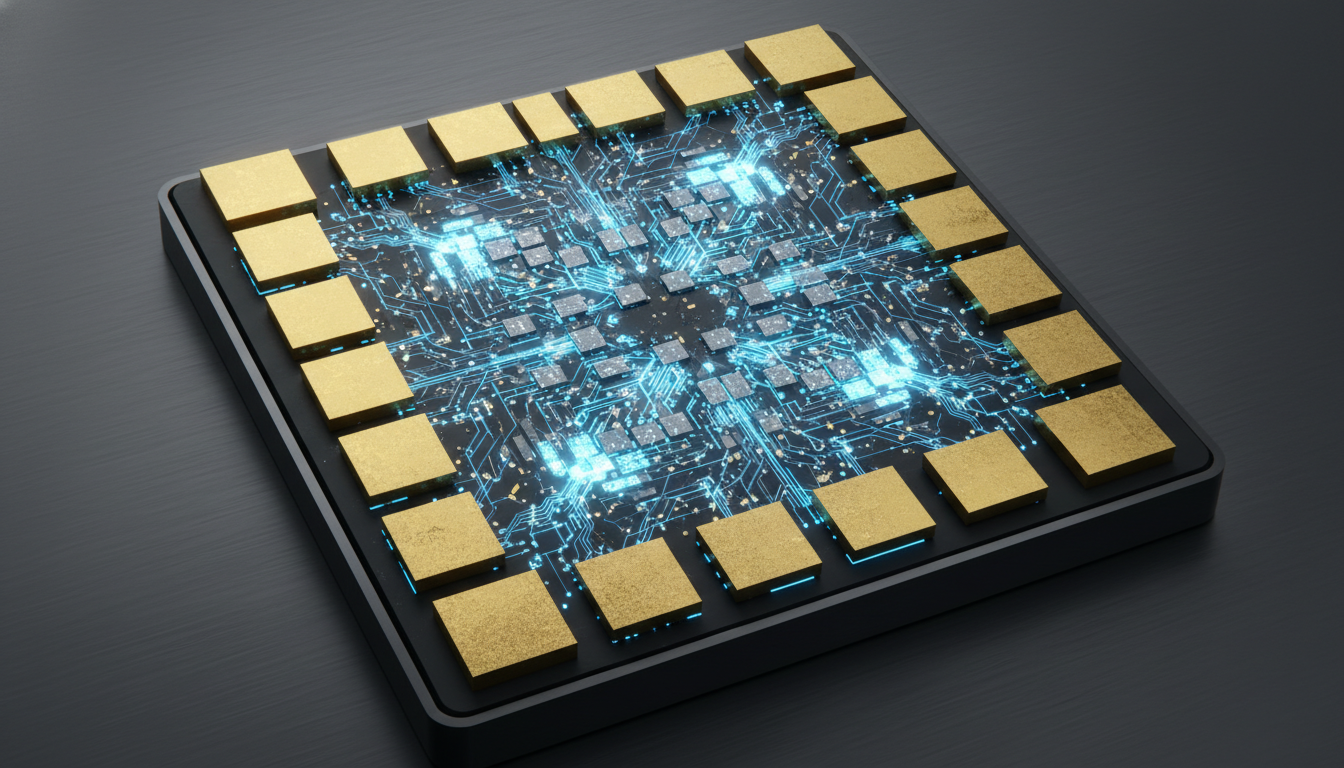

Welcome to the latest Tech News Roundup, covering the Maia 200 AI inference accelerator and the SpaceX IPO and private secondaries. Today we explore major shifts in infrastructure and emerging technology. Microsoft recently unveiled the Maia 200 chip, which it positions as a step up in enterprise AI performance. The hardware is built on a TSMC three nanometer process for efficiency and packs over 140 billion transistors to handle complex models.

Because inference demands high speed data movement, Microsoft optimized every component. This unit delivers over 10 petaFLOPS in FP4 precision. Moreover, the chip integrates 216 GB of HBM3e memory to sustain massive workloads.

Meanwhile, the market for space technology is heating up. Investors are closely watching SpaceX as talks of an IPO and private secondaries gain momentum. This shift highlights a growing demand for private market liquidity. At the same time, companies like OpenAI and Anthropic continue to drive hardware requirements higher.

Microsoft claims this new silicon offers 30 percent better performance per dollar. Consequently, Azure users gain a significant advantage in the cloud market. Indeed, these advances mark a new era for both space and intelligence sectors.

Deep Dive: Tech News Roundup: Maia 200 AI Inference Accelerator and SpaceX IPO & Private Secondaries

The Maia 200 represents a major step in hardware engineering. Engineers built the die on the TSMC 3nm process, a choice that favors high density and power efficiency. The chip integrates more than 140 billion transistors, a scale that allows for complex logic and massive parallel processing.

Performance metrics show how powerful this unit is. A single chip delivers over 10 petaFLOPS in FP4 precision and more than 5 petaFLOPS in FP8. These speeds matter for modern reasoning models, because Microsoft designed this silicon for token generation at scale.

Memory often limits inference tasks more than compute does. Therefore, Microsoft included 216 GB of HBM3e memory. This memory provides nearly 7 TB per second in bandwidth. Additionally, the on die SRAM totals 272 MB. This hierarchy keeps data close to the processing units. Consequently, it reduces the pressure on the main memory system significantly.
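To see why memory can be the limiting factor, the figures above can be dropped into a back-of-the-envelope roofline calculation. The sketch below is illustrative only: it treats the quoted numbers as exact peak values, uses decimal units, and its function names are invented for this example rather than taken from any Microsoft tooling.

```python
# Back-of-the-envelope roofline arithmetic using the figures quoted above.
# Assumption: quoted values are treated as exact peaks; real workloads see less.

FP4_PEAK_FLOPS = 10e15       # "over 10 petaFLOPS" in FP4
FP8_PEAK_FLOPS = 5e15        # "more than 5 petaFLOPS" in FP8
HBM_BANDWIDTH_BPS = 7e12     # "nearly 7 TB per second"
HBM_CAPACITY_BYTES = 216e9   # 216 GB, decimal gigabytes


def ridge_point(peak_flops: float, bandwidth_bps: float) -> float:
    """FLOPs needed per byte read before compute, not memory, is the limit."""
    return peak_flops / bandwidth_bps


def full_sweep_seconds(capacity_bytes: float, bandwidth_bps: float) -> float:
    """Time to stream the entire HBM contents once at peak bandwidth."""
    return capacity_bytes / bandwidth_bps


def max_params(capacity_bytes: float, bits_per_param: int) -> float:
    """Upper bound on parameters that fit in HBM (ignores KV cache, activations)."""
    return capacity_bytes * 8 / bits_per_param


if __name__ == "__main__":
    fp4_ridge = ridge_point(FP4_PEAK_FLOPS, HBM_BANDWIDTH_BPS)
    fp8_ridge = ridge_point(FP8_PEAK_FLOPS, HBM_BANDWIDTH_BPS)
    sweep_ms = full_sweep_seconds(HBM_CAPACITY_BYTES, HBM_BANDWIDTH_BPS) * 1e3
    print(f"FP4 ridge point: {fp4_ridge:.0f} FLOP/byte")
    print(f"FP8 ridge point: {fp8_ridge:.0f} FLOP/byte")
    print(f"One full pass over HBM: {sweep_ms:.1f} ms")
    print(f"Max FP4 parameters: {max_params(HBM_CAPACITY_BYTES, 4) / 1e9:.0f} billion")
```

Under these assumptions the FP4 ridge point lands at roughly 1,400 FLOPs per byte. Work that reuses each byte fewer times than that, as token-by-token decoding typically does, is bounded by bandwidth rather than compute, which is consistent with the emphasis on HBM and on-die SRAM.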

Scale is a core part of the design. The architecture uses a tile based hierarchy and a Network on Chip. Four accelerators form a Fully Connected Quad per tray, and the design scales to 6144 accelerators in a two tier domain. The on die NIC provides 1.4 TB per second in each direction, for 2.8 TB per second of bidirectional bandwidth.
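The scaling figures above follow from simple arithmetic, sketched here in Python. The constants come from the article; the helper names are hypothetical, and the aggregate memory figure assumes every accelerator in a full domain carries the quoted 216 GB of HBM3e.

```python
# Topology arithmetic from the figures quoted above (helper names are illustrative).

ACCELERATORS_PER_TRAY = 4        # one Fully Connected Quad per tray
MAX_ACCELERATORS = 6144          # quoted two tier domain limit
NIC_TBPS_PER_DIRECTION = 1.4     # on die NIC, each direction
HBM_GB_PER_ACCELERATOR = 216     # assumed identical on every accelerator


def trays_needed(accelerators: int, per_tray: int = ACCELERATORS_PER_TRAY) -> int:
    """Trays required to host the given accelerator count (ceiling division)."""
    return -(-accelerators // per_tray)


def bidirectional_tbps(per_direction: float) -> float:
    """Bidirectional bandwidth is the sum of both directions."""
    return 2 * per_direction


def aggregate_hbm_tb(accelerators: int, gb_each: int = HBM_GB_PER_ACCELERATOR) -> float:
    """Total HBM across the domain, in decimal terabytes."""
    return accelerators * gb_each / 1000


if __name__ == "__main__":
    print(f"Trays in a full domain: {trays_needed(MAX_ACCELERATORS)}")
    print(f"NIC bidirectional: {bidirectional_tbps(NIC_TBPS_PER_DIRECTION):.1f} TB/s")
    print(f"Aggregate HBM: {aggregate_hbm_tb(MAX_ACCELERATORS):.1f} TB")
```

On these numbers a full domain works out to 1,536 trays and about 1.33 PB of combined HBM.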

Advanced Thermal Solutions and Efficiency

High performance hardware generates significant heat, and the Maia 200 features a 750W SoC TDP. To manage this, Microsoft supports both air and liquid cooling. This flexibility allows for integration into various Azure infrastructure setups and helps the system sustain performance during heavy AI workloads.
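For a rough sense of what a 750W TDP means at scale, the quoted figures can be combined as below. This is a sketch under stated assumptions: it counts only the SoC power budget (no host CPUs, networking, or cooling overhead), treats peak FP4 throughput as achievable at TDP, and uses helper names invented for illustration.

```python
# Power arithmetic from the quoted figures. SoC budget only; facility
# overheads such as cooling and networking are deliberately excluded.

SOC_TDP_WATTS = 750        # quoted SoC TDP
FP4_PEAK_FLOPS = 10e15     # "over 10 petaFLOPS" in FP4
MAX_ACCELERATORS = 6144    # quoted two tier domain limit


def peak_tflops_per_watt(peak_flops: float, tdp_watts: float) -> float:
    """Peak throughput per watt of SoC power budget, in TFLOPS/W."""
    return peak_flops / tdp_watts / 1e12


def domain_soc_power_mw(accelerators: int, tdp_watts: float) -> float:
    """Combined SoC power budget for a group of accelerators, in megawatts."""
    return accelerators * tdp_watts / 1e6


if __name__ == "__main__":
    eff = peak_tflops_per_watt(FP4_PEAK_FLOPS, SOC_TDP_WATTS)
    power = domain_soc_power_mw(MAX_ACCELERATORS, SOC_TDP_WATTS)
    print(f"Peak FP4 efficiency: {eff:.1f} TFLOPS/W")
    print(f"SoC power budget for a full domain: {power:.3f} MW")
```

A full 6144-accelerator domain would carry an SoC power budget of about 4.6 MW on these assumptions, which helps explain why both air and liquid cooling options matter for deployment.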

Key Features of Maia 200:

- Fabricated on the TSMC 3nm process for efficiency.

- Contains over 140 billion transistors for high logic density.

- Features 216 GB of HBM3e memory with nearly 7 TB per second of bandwidth.

- Includes 272 MB of on die SRAM for fast data access.

- Provides over 10 petaFLOPS in FP4 and more than 5 petaFLOPS in FP8.

- Supports 2.8 TB per second bidirectional NIC bandwidth.

- Enables scaling to 6144 accelerators for massive clusters.

- Offers air and liquid cooling options for diverse environments.

Market Impact in Tech News Roundup: Maia 200 AI Inference Accelerator and SpaceX IPO & Private Secondaries

The arrival of this silicon changes the economics of cloud computing. Microsoft claims the Maia 200 provides 30 percent better performance per dollar than previous Azure offerings. This improvement is vital for enterprise clients managing large budgets. Because organizations need to lower costs, this hardware becomes an essential tool. It enables them to run complex models at a lower cost. This evolution matters as much as the shifts in SpaceX private markets reported by TechCrunch.
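It is worth spelling out what a 30 percent gain in performance per dollar means for a budget. The sketch below is plain arithmetic, not a pricing model: it assumes the workload is fixed and that the claimed gain applies uniformly, and the baseline budget in the example is a hypothetical figure chosen only for illustration.

```python
# Converts a performance-per-dollar gain into cost for a fixed workload.
# Assumption: the claimed gain applies uniformly to the whole workload.


def relative_cost(perf_per_dollar_gain: float) -> float:
    """Cost of the same work relative to baseline, e.g. gain=0.30 for 30 percent."""
    if perf_per_dollar_gain <= -1:
        raise ValueError("gain must be greater than -1")
    return 1 / (1 + perf_per_dollar_gain)


def savings_fraction(perf_per_dollar_gain: float) -> float:
    """Fraction of baseline spend saved for the same work."""
    return 1 - relative_cost(perf_per_dollar_gain)


if __name__ == "__main__":
    gain = 0.30                       # Microsoft's claimed improvement
    baseline_budget = 1_000_000.0     # hypothetical annual spend in dollars
    new_budget = baseline_budget * relative_cost(gain)
    print(f"Relative cost: {relative_cost(gain):.3f}")
    print(f"Savings: {savings_fraction(gain):.1%}")
    print(f"Hypothetical budget after gain: ${new_budget:,.2f}")
```

The takeaway is that 30 percent more performance per dollar translates to roughly 23 percent lower spend for the same work, not 30 percent.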

Scalability is another factor that sets this hardware apart. The system can scale to 6144 accelerators within a two tier domain, enough capacity to serve the largest language models. The architecture also supports high utilization as sequence lengths grow, so companies can rely on this infrastructure for their most demanding AI projects and have headroom for future growth.

Maintenance and cooling are handled with enterprise grade precision. The hardware integrates directly with the Microsoft cloud control plane. This link allows for fleet wide maintenance and real time monitoring. Additionally, the unit supports both air and liquid cooling in Microsoft data centers. Such flexibility ensures that the 750W TDP does not hinder deployment. As a result, the Maia 200 fits perfectly into modern infrastructure.

Key Benefits for Enterprises:

- Lower costs with 30 percent better performance per dollar.

- Scale up to 6144 accelerators in a single cluster.

- Manage resources easily via the Azure control plane.

- Choose between air or liquid cooling for better density.

- Boost token generation speeds for reasoning models.

- Support high utilization for Microsoft 365 Copilot workloads.

Hardware Innovation: Comparing Maia 200 to Industry Standards

Microsoft created the Maia 200 to push the limits of AI performance. The silicon features a dense three nanometer fabrication process from TSMC, and its high speed memory system is much faster than previous generations. Consequently, cloud users receive more value for their operational investment. This hardware is the centerpiece of this roundup, and its technical specifications highlight a clear focus on efficient data movement across the chip.

| Feature Category | Microsoft Maia 200 | Industry Rivals |
|---|---|---|
| Logic Process | TSMC three nanometer | TSMC four or five nanometer |
| Transistor Count | Over 140 Billion | 80 to 100 Billion |
| Memory Capacity | 216 GB HBM3e | 80 to 141 GB HBM |
| Memory Bandwidth | 7 TB per second | 3 to 5 TB per second |
| On Die SRAM | 272 MB Total | 50 to 120 MB |
| NIC Bandwidth | 2.8 TB per second | 400 to 900 GB per second |
| Scale Limit | 6144 Accelerators | 256 to 512 Units |

In addition to the raw specifications, several key factors define this hardware evolution:

- Hardware utilizes high density three nanometer logic for peak efficiency.

- Integrated memory provides seven terabytes per second of data bandwidth.

- Advanced networking allows scaling up to six thousand accelerators per cluster.

- Large on die storage reduces latency during complex reasoning tasks.

- Specialized architecture delivers thirty percent better value for cloud workloads.

Conclusion: Pioneering AI Hardware for Enterprise Growth

The introduction of the Maia 200 AI inference accelerator marks a pivotal moment in the advancement of AI hardware. Its blend of cutting edge processes, robust architecture, and scalable design sets a new standard, ensuring that enterprises can meet their AI computation needs efficiently and cost effectively. With its superior performance per dollar, the Maia 200 serves as a significant upgrade to Azure’s infrastructure, tying into broader tech movements illustrated by market shifts like the SpaceX IPO and interest in private secondaries.

As AI continues to transform industries, companies like EMP0 are crucial in helping businesses leverage such advanced technology. EMP0 stands as a leader in providing AI and automation solutions that drive growth, enhance safety, and multiply revenue for clients. By integrating these cutting-edge technologies, businesses can gain a competitive edge in the rapidly evolving tech landscape.

For those interested in harnessing the power of AI to fuel business success, explore the innovative services and platforms offered by EMP0. Connect with their pioneering solutions to guide your enterprise into the future of AI-driven innovation. Visit EMP0’s Medium or check out their n8n presence for more insights into transforming your business approach with AI.

Frequently Asked Questions (FAQs)

What makes the Maia 200 architecture unique for AI inference?

The Maia 200 architecture uses a TSMC three nanometer process and over 140 billion transistors. Moreover, it focuses on data movement through a tile based hierarchy and massive on die SRAM. This design reduces memory pressure, while more than 10 petaFLOPS of FP4 compute supports high speed token generation.

How does the Maia 200 compare to previous Azure hardware in terms of cost?

Microsoft claims the Maia 200 provides a 30 percent better performance per dollar ratio compared to existing Azure inference hardware. Consequently, this efficiency helps enterprises scale AI workloads while managing budgets. Therefore, it is a strategic move to optimize cloud infrastructure costs for large reasoning models.

Can the Maia 200 scale to support massive enterprise clusters?

Yes, the Maia 200 scales to 6144 accelerators in a two tier domain. Furthermore, it uses an Ethernet based fabric and a powerful NIC to provide 2.8 terabytes per second of bidirectional bandwidth. As a result, massive models can run across huge data center footprints quite effectively.

How does the Maia 200 integrate with existing Azure infrastructure?

The chip integrates directly with the Azure control plane for fleet wide maintenance and monitoring. Additionally, it supports both air and liquid cooling in Microsoft data centers. This flexibility allows for seamless deployment into high density environments without requiring major structural changes to cloud facilities.

What are the implications of this hardware for the broader AI market?

This hardware launch signals a shift toward custom silicon for specific AI tasks like inference. It arrives alongside other market trends, such as the interest in SpaceX private secondaries. With specialized hardware available, companies can drive faster innovation in areas such as robotics while lowering overall operational costs.