As the world becomes increasingly digital, scaling software applications has sharply increased their energy demands. The move from traditional monolithic systems to microservices and modern AI-driven models has raised the energy consumed across these technologies. With this growth in complexity comes the challenge of sustainability: the rising energy footprint of software solutions needs to be addressed.

The demands for computational power and vast datasets are pushing the boundaries of energy consumption, presenting an urgent dilemma for developers and organizations alike. Not only must software systems be capable of handling impressive volumes of data, but they must also do so in an energy-efficient manner.

As we delve deeper into this issue, we will explore the intricate relationship between software scaling and energy efficiency, uncovering both the challenges and potential solutions that define the future of sustainable software development.

Monolithic vs. Microservices Architecture

When discussing software architecture, monolithic and microservices architecture represent two distinct approaches. Each has its advantages and disadvantages, especially regarding energy consumption.

Structure

Monolithic architecture organizes a software application as a single unit. All components—user interface, database, and others—are intertwined. In contrast, microservices architecture divides applications into smaller, independent services. Each service performs a specific business function and can be developed, deployed, and scaled individually.

Flexibility

Microservices offer flexibility by allowing developers to use different technologies or programming languages for each service. Conversely, monolithic systems lack this flexibility. Changing one component usually requires reworking the entire application.

Deployment

Deploying a monolithic application is simpler because there is only one executable to manage. However, as the application grows, updates become slow and costly, raising energy use during deployment. Microservices support continuous deployment of individual services, which can lead to more efficient resource usage and potentially lower energy consumption during updates.

Scalability

Scaling monolithic architecture is challenging. It requires replicating the entire application on multiple servers, which can lead to resource underutilization. In contrast, microservices can be scaled independently. If one service has high demand, only that service is scaled, optimizing energy usage.
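The scaling difference described above can be illustrated with a small model. This is a minimal sketch, not a benchmark: the `Component` fields, the three example components, and all of their numbers are hypothetical, and the model deliberately ignores the inter-service communication overhead discussed later in the article.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class Component:
    name: str
    cpu_units: float  # hypothetical CPU cost of running one instance
    load: float       # hypothetical demand, in requests per second
    capacity: float   # requests per second a single instance can serve


def instances_needed(load: float, capacity: float) -> int:
    """Smallest number of instances that covers the load (at least one)."""
    return max(1, ceil(load / capacity))


def monolith_cpu(components: list[Component]) -> float:
    """The whole application is replicated to satisfy its busiest component."""
    replicas = max(instances_needed(c.load, c.capacity) for c in components)
    return replicas * sum(c.cpu_units for c in components)


def microservices_cpu(components: list[Component]) -> float:
    """Each service is replicated only as far as its own load requires."""
    return sum(instances_needed(c.load, c.capacity) * c.cpu_units for c in components)


# Hypothetical workload: only "checkout" is under heavy demand.
components = [
    Component("checkout", cpu_units=2.0, load=400, capacity=100),
    Component("catalog", cpu_units=1.0, load=80, capacity=100),
    Component("reports", cpu_units=3.0, load=10, capacity=100),
]

print("monolith CPU units:", monolith_cpu(components))
print("microservices CPU units:", microservices_cpu(components))
```

In this toy workload the monolith provisions idle copies of the lightly used components, which is the underutilization described above. Whether that saving survives in practice depends on the communication and management overhead that each additional service introduces.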

Energy Consumption Impacts

Research shows that microservices architectures consume about 43.79% more energy per transaction than monolithic systems. This rise is mainly due to the overhead of communication between microservices and the additional resources needed to manage each service instance. Finer granularity in microservices increases energy consumption because of deployment complexity and communication overhead.

Monolithic applications may be more energy-efficient in some cases but can struggle with resource utilization as they scale. This can lead to increased energy demands to support unnecessary components. The tight coupling in monolithic applications also makes them less adaptable to changes aimed at optimizing energy consumption.

While microservices provide flexibility and scalability, they come with an energy cost that must be managed. Effective design strategies are needed to mitigate energy inefficiencies in microservices systems and make sustainable choices that address ongoing energy demands in software architecture.

“To build a sustainable digital future, we must rethink how we design and deploy software.”

Recent findings attribute the roughly 43.79% per-transaction energy premium of microservices over monolithic systems to the operational overhead of inter-service communication, which raises both energy usage and network traffic.

Moreover, a study on microservice granularity found that finer-grained microservices raise energy consumption by an average of 461 joules (13%) and lengthen response times by around 5.2 milliseconds (14%). While microservices add flexibility in development, they also pose serious sustainability challenges due to their energy demands.
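The reported figures can be turned into a quick back-of-the-envelope calculation. The sketch below assumes that each percentage is relative to the coarser-grained (or monolithic) baseline; the function names and the 100-joule example transaction are hypothetical and not taken from the cited studies.

```python
# Reported per-transaction premium of microservices over a monolith.
MICROSERVICE_OVERHEAD = 0.4379


def microservice_energy(monolith_joules: float) -> float:
    """Estimate microservice energy for a transaction with a known monolith cost."""
    return monolith_joules * (1 + MICROSERVICE_OVERHEAD)


def implied_baseline(absolute_increase: float, relative_increase: float) -> float:
    """Back out the baseline value from an absolute and a relative increase."""
    return absolute_increase / relative_increase


# Granularity study: +461 J is reported as 13%, +5.2 ms as 14%.
baseline_energy_j = implied_baseline(461, 0.13)
baseline_latency_ms = implied_baseline(5.2, 0.14)

print(f"implied baseline energy: {baseline_energy_j:.0f} J")
print(f"implied baseline latency: {baseline_latency_ms:.1f} ms")

# Hypothetical transaction that costs 100 J on a monolith.
print(f"estimated microservice cost: {microservice_energy(100.0):.2f} J")
```

The implied baselines are only as reliable as the assumption about what the percentages are measured against, so they are best read as an order-of-magnitude check.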

When scaling software, the complexities become prominent. Managing a plethora of independent services requires robust infrastructure and skilled teams, often leading to operational complications. Furthermore, the network-based interactions inherent in microservices can introduce latency and complicate data consistency, impacting overall performance. Effective monitoring and debugging across these distributed systems require advanced tools that can track requests among multiple services, as traditional methods may not be adequate.

In summary, while microservices offer scalability and modularity, their substantial energy costs necessitate strategic architectural design and careful resource management to mitigate the environmental impact of modern software applications.

| Architecture Type | Energy Consumption per Transaction | Example Use Case | Energy Efficiency Notes |
|---|---|---|---|
| Monolithic | Moderate (baseline) | Traditional e-commerce platforms | Efficient for small applications; no inter-service communication overhead. |
| Microservices | About 43.79% above monolithic | Cloud-native applications, API services | Increased overhead due to service intercommunication, but allows for independent scaling. |
| AI-Driven | High | Large-scale AI models (e.g., GPT-3) | High energy demands, especially during training; substantial resource consumption. |


Implications of AI Training on Energy Needs

The energy consumption associated with training large AI models is substantial and increasing. Training OpenAI’s GPT-3, which consists of 175 billion parameters, consumed about 1,287 megawatt-hours (MWh) of electricity, equating to the annual energy usage of approximately 120 average U.S. homes (Insights Integration). In a more recent example, training the even larger GPT-4 required over 50 gigawatt-hours (GWh) of electricity, which matches the annual consumption of around 3,600 U.S. homes (Arcee.ai).
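The household comparisons above are simple unit conversions, and it can be useful to check what per-home consumption they imply. The sketch below uses only the figures quoted in this section; the constant and function names are illustrative.

```python
# Figures quoted above, converted to a common unit (MWh).
GPT3_TRAINING_MWH = 1_287
GPT3_HOME_EQUIVALENT = 120
GPT4_TRAINING_MWH = 50 * 1_000  # 50 GWh expressed in MWh
GPT4_HOME_EQUIVALENT = 3_600


def implied_mwh_per_home(total_mwh: float, homes: int) -> float:
    """Annual consumption per home implied by a 'powers N homes' comparison."""
    return total_mwh / homes


gpt3_per_home = implied_mwh_per_home(GPT3_TRAINING_MWH, GPT3_HOME_EQUIVALENT)
gpt4_per_home = implied_mwh_per_home(GPT4_TRAINING_MWH, GPT4_HOME_EQUIVALENT)

print(f"GPT-3 comparison implies {gpt3_per_home:.1f} MWh per home per year")
print(f"GPT-4 comparison implies {gpt4_per_home:.1f} MWh per year per home")
```

The two comparisons imply somewhat different per-home figures, presumably because the cited sources assume different household averages, so the home equivalents are best treated as rough illustrations rather than precise conversions.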

The energy implications do not stop at training; the inference phase—where models like ChatGPT generate responses—also adds significantly to energy consumption. For example, a single query to ChatGPT can require ten times more energy than a standard Google search (Time). As the adoption of AI continues to rise, the cumulative energy demand from inference is expected to surpass that of model training.

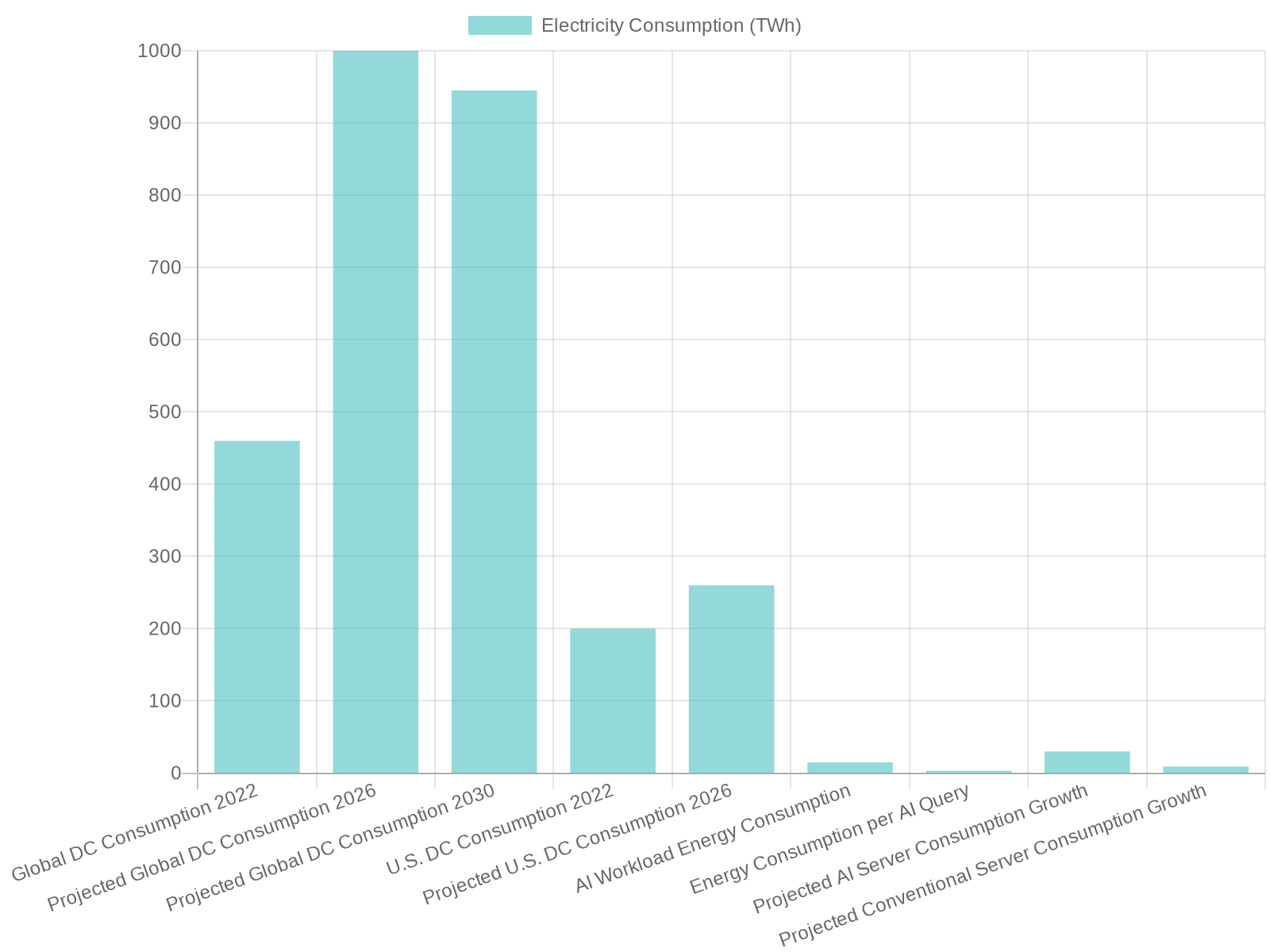

Looking at broader trends, energy demand across the software industry has risen, driven largely by the growth of data centers. In 2024, data centers consumed approximately 415 terawatt-hours (TWh) of electricity, about 1.5% of global electricity consumption (IEA). Projections suggest that by 2030 this could more than double to around 945 TWh, just under 3% of global consumption (IEA). The increasing adoption of AI technologies is the main driver of this growth.

In the United States alone, data centers consumed about 176 TWh of electricity in 2023, approximately 4.4% of the nation’s total electricity use—a significant increase from 76 TWh (1.9%) in 2018, coinciding with greater reliance on AI-specific servers (Medium).
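To put the U.S. figures in perspective, the growth can be expressed as a compound annual rate. This is a minimal sketch using only the numbers quoted above; the `cagr` helper is illustrative, and the calculation assumes smooth year-over-year growth, which the underlying data may not show.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


# U.S. data center consumption: 76 TWh (2018) to 176 TWh (2023).
us_growth = cagr(76, 176, 2023 - 2018)
print(f"implied annual growth: {us_growth:.1%}")

# Consistency check: total U.S. consumption implied by the quoted shares.
implied_total_2018_twh = 76 / 0.019
implied_total_2023_twh = 176 / 0.044
print(f"implied U.S. total, 2018: {implied_total_2018_twh:.0f} TWh")
print(f"implied U.S. total, 2023: {implied_total_2023_twh:.0f} TWh")
```

The implied rate is roughly 18% per year, and both share figures point to about the same national total, which suggests the increase reflects data center growth rather than a change in overall U.S. electricity use.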

These statistics highlight not only the immense energy demands posed by training large AI models like GPT-3 and GPT-4 but also the broader impact of AI growth on the energy consumption trends within the software industry. They stress an urgent need to develop more energy-efficient algorithms and infrastructure to mitigate the environmental impact as the reliance on AI technologies intensifies.

Energy Consumption Statistics in Software Development

The demand for energy in software and AI is surging, and the following statistics highlight the pressing need for improved energy efficiency in our technological infrastructure. Recent analyses indicate that energy consumption by AI systems is poised to climb dramatically.

- Training AI Models: Training large AI models like OpenAI’s GPT-3 consumed approximately 1,287 megawatt-hours (MWh) of electricity, equivalent to the annual energy consumption of about 120 average U.S. homes. This demand is growing, as evidenced by GPT-4’s training, which required more than 50 gigawatt-hours (GWh) of electricity, matching the annual consumption of around 3,600 U.S. homes.

- Electricity Consumption by Data Centers: In 2023, U.S. data centers consumed about 176 terawatt-hours (TWh) of electricity, accounting for around 4.4% of the nation’s total electricity consumption, a sizeable jump from 76 TWh (1.9%) in 2018 (Source: Medium). Globally, data centers consumed an estimated 415 TWh in 2024, representing about 1.5% of total world electricity consumption. By 2030, this could reach approximately 945 TWh as AI demand drives consumption upwards (Source: IEA).

- Projected AI Consumption: In upcoming years, projections suggest that AI could account for nearly 49% of global data center power consumption—this is indicative of the escalating impact AI technologies are poised to have on energy resources (The Guardian).

These statistics reinforce the case for designing more energy-efficient software, particularly as the demand for AI continues to swell. The industry must prioritize energy-efficient algorithms and infrastructure to ensure sustainable growth in the future.

In conclusion, the relationship between energy consumption and software development is becoming increasingly important as the digital landscape evolves. The shift from monolithic architectures to microservices and AI-driven systems has significantly increased energy demands, presenting a pressing challenge for the industry. With statistics indicating that microservices can consume about 43.79% more energy per transaction than monolithic systems, developers and companies must reassess their strategies regarding software architecture and energy efficiency.

As we continue to scale software applications, prioritizing sustainable practices is vital. The implications of training large AI models and managing extensive data center operations underscore the urgent need for energy-efficient solutions. Hence, it is imperative for stakeholders to commit to designing and deploying software that not only meets the demands of scalability and performance but also upholds environmental responsibility.

The call to action is clear: developers and companies should focus on integrating energy-efficient practices into their software design and operational strategies, ensuring that as our software solutions grow, they do so sustainably.

Call to Action

As we navigate the challenges of energy consumption in software development, it is crucial for every stakeholder—be it developers, organizations, or policymakers—to take proactive steps. Here are a few actionable measures to consider:

- Adopt Energy-Efficient Practices: Review and optimize your software architecture to minimize energy usage. Consider transitioning from microservices to monolithic architectures where feasible to reduce overhead and improve efficiency.

- Invest in Sustainable Technologies: Support and invest in technologies that enhance energy efficiency, such as advanced data management systems that optimize resource usage in data centers.

- Raise Awareness: Educate teams about the impact of their choices on energy consumption. Promote a culture of sustainability within your organization to prioritize energy-efficient software solutions.

- Engage in Research: Encourage and participate in research that focuses on energy-efficient algorithms and infrastructures to contribute to a sustainable digital future.

By implementing these strategies, we can work toward a future where software development aligns with sustainability goals, ensuring a responsible approach to powering our digital world.

SEO Optimization for Sustainable Software

To enhance the SEO performance of your content related to energy consumption in software development, particularly the themes of sustainable software and green coding practices, consider integrating the following targeted strategies throughout your article. By doing so, you’ll not only optimize for relevant keywords but also advocate for the principles of sustainable development.

- Optimize Page Load Times: Fast-loading websites improve user experience and reduce energy consumption, aligning with sustainable practices.
  - Example: Compress images using formats like WebP or AVIF to reduce file sizes without compromising quality.
  - Benefit: Improved site performance leads to higher search engine rankings and a reduced carbon footprint.

- Implement Sustainable UX/UI Design: Adopting energy-efficient design elements can lower power consumption and enhance user engagement.
  - Example: Offer a dark mode option to reduce screen brightness and conserve energy.
  - Benefit: Energy savings and improved user satisfaction contribute to better SEO performance.

- Reduce Server Requests and Data Transfers: Minimizing HTTP requests and data transfers decreases energy usage and enhances site speed.
  - Example: Combine CSS and JavaScript files to reduce the number of server requests.
  - Benefit: Faster load times improve user experience and SEO rankings.

- Choose Green Hosting Providers: Selecting web hosts powered by renewable energy supports sustainability and can positively impact SEO.
  - Example: Host your website with providers that operate using 100% renewable energy.
  - Benefit: Demonstrating environmental responsibility can enhance brand reputation and attract eco-conscious users.

- Create Evergreen and Sustainable Content: Developing content that remains relevant over time reduces the need for frequent updates and conserves resources.
  - Example: Write comprehensive guides on sustainable software practices that provide long-term value.
  - Benefit: Evergreen content attracts consistent traffic, improving SEO performance.

- Utilize Efficient Coding Practices: Writing clean, efficient code reduces resource consumption and enhances website performance.
  - Example: Optimize algorithms to minimize processing power requirements.
  - Benefit: Efficient code leads to faster load times and a lower environmental impact.

- Monitor and Optimize Energy Consumption: Regularly assessing your website’s energy usage can identify areas for improvement.
  - Example: Use tools like Google PageSpeed Insights to analyze performance and receive optimization suggestions.
  - Benefit: Continuous optimization supports sustainability goals and enhances SEO.
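As a small illustration of the efficient-coding and monitoring points in the list above, the sketch below compares two ways of finding duplicate values and times both. The function names and the sample data are hypothetical, and wall-clock time is used only as a rough proxy for processing effort; it is not an energy measurement.

```python
import timeit


def find_duplicates_quadratic(items: list[int]) -> list[int]:
    """Compare every pair of items: simple, but work grows with n squared."""
    dups: list[int] = []
    for i, a in enumerate(items):
        for b in items[i + 1:]:
            if a == b and a not in dups:
                dups.append(a)
    return sorted(dups)


def find_duplicates_linear(items: list[int]) -> list[int]:
    """Track seen values in a set: a single pass over the data."""
    seen: set[int] = set()
    dups: set[int] = set()
    for item in items:
        if item in seen:
            dups.add(item)
        else:
            seen.add(item)
    return sorted(dups)


# Hypothetical data: 500 unique values plus 10 repeated ones.
data = list(range(500)) + list(range(0, 500, 50))

for func in (find_duplicates_quadratic, find_duplicates_linear):
    seconds = timeit.timeit(lambda: func(data), number=3)
    print(f"{func.__name__}: {seconds:.4f} s for 3 runs")
```

Both functions return the same result, so the choice between them is purely about how much work the processor does. Timing a change before and after, as done here with `timeit`, is a lightweight way to confirm that an optimization actually reduces that work.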

By effectively including these sustainable strategies and green coding principles in your content, you can improve your online visibility for keywords like “sustainable software” and “green coding practices” while promoting environmentally friendly development practices. According to recent research, organizations that implement such practices can save significant energy costs (up to 90%) and reduce their carbon footprint, enhancing their brand’s reputation as a leader in sustainability.

References:

- Green IT: How green software development can reduce your carbon footprint – SIG

- Embracing Green Coding: Best Practices for Sustainable Software Development

- Green Coding: 5 sample codes for green software development

- Core Logic

- Green Coding: How Efficient Programming Reduces Global Electricity Demand | EarthHero