In the rapidly changing field of artificial intelligence, finding dependable ways to assess models is essential. One method that has emerged is Contextualized AI Model Evaluation, which treats the context behind a query (who is asking, why, and what a useful answer looks like) as part of the evaluation itself. Standard evaluation methods often miss the subtleties of user intent and the specific characteristics of different audiences. As a result, their judgments can be inconsistent and may not reflect what a model can actually do.

Including contextual elements in evaluations can make them considerably more reliable and helps ensure that AI systems are effective not only in controlled settings but also in real-world use. As discussions about AI accountability and ethics grow, the significance of contextualized evaluations becomes clearer. The approach moves away from superficial assessments toward judgments that consider how well models meet the needs of their intended users, which in turn supports fairer and more trustworthy AI applications.

Traditional evaluation methods for AI models have a significant shortcoming: they rarely incorporate the contextual factors that matter in real-world applications. They typically focus on surface-level metrics, such as accuracy, without considering user intent or the needs of different audiences. As has been noted:

“Current evaluation methods often overlook this missing context, resulting in inconsistent judgments.”

When an AI model is evaluated purely on predetermined metrics, the evaluation can miss the complexities of diverse user interactions and application scenarios. This lack of attention to context can have serious consequences for how well the model performs in practice.

For example, a language model evaluated in a sterile environment might score well statistically yet fail to meet user needs once deployed in varied situations. Such oversights can also hide biases, particularly toward the dominant cultural perspectives described as WEIRD (Western, Educated, Industrialized, Rich, and Democratic). When users from other backgrounds engage with these models, outcomes can differ significantly: the models, though seemingly effective, may fail to serve the specific needs of underrepresented groups.

Moreover, the absence of context can skew evaluations toward certain attributes over others, distorting model rankings. An evaluation that emphasizes helpfulness, by contrast, may favor context-rich queries that reveal whether a model understands user intent. Traditional methods that ignore these nuances can give a false sense of accuracy and reliability.

To improve AI model evaluation, the field needs methods that integrate context. Such approaches shift the focus from simplistic measures to more meaningful criteria, which promotes fairness and improves the real-world effectiveness of AI systems across a broader range of users. Without this change, the field risks perpetuating practices that hinder the development of reliable and responsible AI.

These limitations make adopting contextualized evaluations all the more urgent. The comparison below summarizes how the two approaches differ.

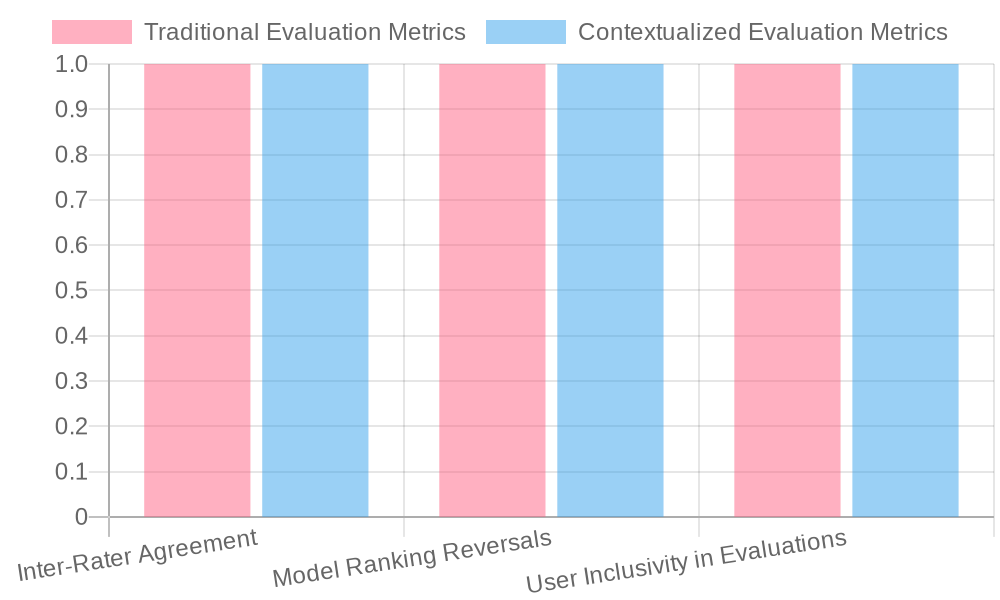

| Aspect | Traditional Evaluations | Contextualized Evaluations |
|---|---|---|
| Inter-rater Agreement | Often low, usually < 75% | Higher; improved by 3-10 percentage points |
| Bias Awareness | Limited; often overlooks biases | High; actively identifies biases |
| Model Ranking Reversals | Rare; rankings tend to be static | Common; context can shift rankings |
| Focus of Evaluation | Surface-level metrics such as accuracy | Meaningful criteria such as user intent |
| Real-world Application | Predictive but can be inaccurate | More reflective of actual performance |
| User Inclusivity | Potentially excludes diverse users | More inclusive of varied user contexts |
| Evaluation Consistency | Variable; context is not considered | More consistent across scenarios |

Including context in AI model evaluations can reverse model rankings, underlining the role of user intent and contextual information. Traditional evaluation methods often overlook these aspects, prioritizing surface-level metrics such as accuracy without accounting for the nuances of diverse user interactions.
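What "including context" means in practice can be sketched as a judge prompt that optionally carries the query's context. This is a minimal illustration under stated assumptions, not the protocol from any particular study: the `QueryContext` fields (user profile, intent, usefulness criteria), the `build_judge_prompt` helper, and the prompt wording are all hypothetical choices made for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class QueryContext:
    """Hypothetical record of the context behind an underspecified query."""
    user_profile: str  # who is asking, e.g. their background or expertise
    intent: str  # what the user is trying to accomplish
    criteria: list[str] = field(default_factory=list)  # what makes a response useful


def build_judge_prompt(query: str, response_a: str, response_b: str,
                       context: QueryContext | None = None) -> str:
    """Assemble a pairwise-comparison prompt for a human or model judge.

    With context=None this is a conventional, context-free comparison;
    otherwise the context is shown to the judge before the responses.
    """
    lines = [f"Query: {query}"]
    if context is not None:
        lines.append(f"User: {context.user_profile}")
        lines.append(f"Intent: {context.intent}")
        if context.criteria:
            lines.append("A useful response should:")
            lines.extend(f"- {criterion}" for criterion in context.criteria)
    lines.append(f"Response A: {response_a}")
    lines.append(f"Response B: {response_b}")
    lines.append("Which response is better for this query? Answer A or B.")
    return "\n".join(lines)


# Hypothetical context attached to an open-ended query.
ctx = QueryContext(
    user_profile="a high-school student with no biology background",
    intent="understand the basic idea for a class presentation",
    criteria=["avoids jargon", "uses a concrete analogy"],
)
query = "How do antibiotics work against bacteria?"
plain_prompt = build_judge_prompt(query, "<response A>", "<response B>")
contextual_prompt = build_judge_prompt(query, "<response A>", "<response B>", ctx)
print(contextual_prompt)
```

Keeping the context-free and contextual prompts identical apart from the context lines means any change in verdicts between the two conditions can be attributed to the context itself.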

A notable example is the study Contextualized Evaluations: Taking the Guesswork Out of Language Model Evaluations, which shows that providing context around queries significantly changes evaluation outcomes. With context, evaluators relied less on surface-level criteria such as style, and win rates between some model pairs flipped (arxiv.org). In other words, a model that looks strong in isolation, under a traditional evaluation, may fall behind once it is judged against what a specific user actually needs.
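A ranking reversal is easy to see with pairwise win rates. The sketch below uses invented verdicts (not data from the study) for two hypothetical models, `A` and `B`, judged on the same ten queries with and without context. Counting a tie as half a win is an assumption here; it is one common convention among several.

```python
from __future__ import annotations

from collections import Counter


def win_rate(judgments: list[str], model: str) -> float:
    """Share of pairwise comparisons won by `model`; ties count as half a win."""
    counts = Counter(judgments)
    return (counts[model] + 0.5 * counts["tie"]) / len(judgments)


def leader(judgments: list[str]) -> str:
    """Return which of models "A" and "B" has the higher win rate, or "tie"."""
    a, b = win_rate(judgments, "A"), win_rate(judgments, "B")
    if a > b:
        return "A"
    if b > a:
        return "B"
    return "tie"


# Hypothetical verdicts for the same ten queries, judged twice:
# once without context and once with the query's context shown.
no_context = ["A", "A", "A", "B", "A", "tie", "A", "B", "A", "A"]
with_context = ["B", "A", "B", "B", "A", "B", "tie", "B", "B", "A"]

for label, verdicts in [("no context", no_context), ("with context", with_context)]:
    print(f"{label}: A wins {win_rate(verdicts, 'A'):.2f}, leader = {leader(verdicts)}")
```

With these made-up verdicts, model A leads without context and model B leads once context is shown, which is the shape of the flip described above.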

Similarly, the study IART: Intent-aware Response Ranking with Transformers in Information-seeking Conversation Systems shows how integrating user intent can lead to more accurate response rankings. IART is a ranking model rather than an evaluation protocol, but it illustrates the same principle: by attending to users' specific inquiries and objectives, it ranks responses better than approaches that ignore intent (arxiv.org).

Likewise, Contextual Dual Learning Algorithm with Listwise Distillation for Unbiased Learning to Rank addresses biases that arise when contextual factors are neglected in training ranking models. By incorporating context, the method produces more accurate and less biased rankings than conventional approaches (arxiv.org).

These examples reinforce the concern that context-free evaluations tend to favor WEIRD contexts, sidelining the experiences of less represented users. Incorporating context allows for a more equitable assessment framework in which AI models are judged not only on accuracy but also on their relevance and effectiveness across different user contexts and requirements. Ultimately, contextualized evaluations can lead to fairer and more effective AI outcomes for audiences with varying needs.
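One practical way to surface this kind of skew is to report scores per user context rather than only in aggregate. This sketch assumes each evaluation result is tagged with a context group; the group labels, the 0-1 score scale, and the scores themselves are hypothetical, chosen only to show how a respectable overall average can hide a large gap between groups.

```python
from __future__ import annotations

from collections import defaultdict
from statistics import mean


def scores_by_context(results: list[tuple[str, float]]) -> dict[str, float]:
    """Average the evaluation score separately for each user-context group."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for group, score in results:
        grouped[group].append(score)
    return {group: mean(scores) for group, scores in grouped.items()}


# Hypothetical per-response scores tagged with the user context they were judged in.
results = [
    ("majority_context", 0.90), ("majority_context", 0.80),
    ("majority_context", 0.85), ("majority_context", 0.95),
    ("minority_context", 0.50), ("minority_context", 0.60),
]

overall = mean(score for _, score in results)
per_group = scores_by_context(results)
gap = max(per_group.values()) - min(per_group.values())

print(f"overall: {overall:.3f}")
for group, avg in per_group.items():
    print(f"{group}: {avg:.3f}")
print(f"largest gap between groups: {gap:.3f}")
```

With these invented numbers the overall mean looks acceptable, while the per-group breakdown reveals a gap of roughly a third of the scale.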

Contextualized evaluations can improve the fairness of AI model assessment by exposing systemic biases that traditional methods miss. They take into account factors such as user intent and the specific context in which the technology will be applied, and early evidence indicates that doing so produces measurable improvements in evaluation outcomes.

For instance, one study found that giving evaluators context improved inter-rater agreement by 3-10 percentage points (absolute), indicating more consistent judgments. The same work found cases where context reversed model rankings, showing how much results can change when user intent is taken into account.
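Inter-rater agreement can be measured in several ways, and the statistic behind the 3-10 point figure may differ from the ones used here. As a sketch, the code below computes raw percent agreement and Cohen's kappa, which corrects for the agreement expected by chance, on hypothetical labels from two raters comparing responses without and with context.

```python
from __future__ import annotations

from collections import Counter


def percent_agreement(r1: list[str], r2: list[str]) -> float:
    """Fraction of items on which two raters gave the same label."""
    if len(r1) != len(r2) or not r1:
        raise ValueError("raters must label the same, non-empty set of items")
    return sum(a == b for a, b in zip(r1, r2)) / len(r1)


def cohens_kappa(r1: list[str], r2: list[str]) -> float:
    """Agreement corrected for chance, based on each rater's label frequencies."""
    n = len(r1)
    observed = percent_agreement(r1, r2)
    c1, c2 = Counter(r1), Counter(r2)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(c1[label] * c2[label] for label in c1.keys() | c2.keys()) / (n * n)
    if expected == 1.0:  # both raters always used one identical label
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical "which response is better" labels from two raters on ten items.
no_ctx_r1 = ["A", "A", "B", "B", "A", "B", "A", "A", "B", "A"]
no_ctx_r2 = ["A", "B", "B", "A", "A", "B", "B", "A", "B", "A"]
ctx_r1 = ["A", "A", "B", "B", "A", "B", "A", "A", "B", "A"]
ctx_r2 = ["A", "A", "B", "A", "A", "B", "B", "A", "B", "A"]

for label, (r1, r2) in [("no context", (no_ctx_r1, no_ctx_r2)),
                        ("with context", (ctx_r1, ctx_r2))]:
    print(f"{label}: agreement={percent_agreement(r1, r2):.2f}, "
          f"kappa={cohens_kappa(r1, r2):.2f}")
```

Kappa comes out lower than raw agreement in both conditions because some matches would occur by chance; reporting both helps distinguish genuine consensus from coincidence.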

One of the pivotal studies in this area, Contextualized Evaluations: Taking the Guesswork Out of Language Model Evaluations, illustrates how incorporating context can change perceptions of model effectiveness. Its findings reveal an implicit bias toward WEIRD (Western, Educated, Industrialized, Rich, and Democratic) contexts in models' "default" responses to queries given without context, which can sideline the experiences of less represented users. A contextualized framework brings such biases to light so they can be mitigated, and assesses models on their effectiveness across diverse user contexts rather than on traditional metrics alone.

Thus, contextualized evaluations contribute to fairer and more equitable outcomes in AI, helping models serve broader audiences more effectively.

Sources:

- Contextualized Evaluations: Taking the Guesswork Out of Language Model Evaluations

Further studies and evidence are required to deepen the understanding of these impacts across different AI applications.

Key takeaways:

- Improved Inter-Rater Agreement: Contextualized evaluations have been shown to improve inter-rater agreement by 3-10 percentage points, leading to more consistent and reliable assessments of AI models.

- Reversal of Model Rankings: Incorporating context in evaluations can reverse model rankings. This indicates that models deemed effective in traditional assessments might struggle when evaluated considering user intent and real-world contexts.

- Highlighting Biases: Traditional evaluation methods often reflect biases, particularly toward WEIRD (Western, Educated, Industrialized, Rich, and Democratic) contexts. Contextualized evaluations actively identify and mitigate these biases, promoting fairness.

- Shift in Focus: By adding context, evaluations shift focus from superficial metrics like accuracy to more meaningful criteria such as helpfulness and relevance in user interactions.

- Addressing User Intent: Contextual insights allow evaluators to better understand user intent, leading to a more accurate representation of how AI models perform in real-life scenarios.

In conclusion, incorporating contextualized evaluations into AI model assessment is not just a methodological upgrade; it is a fundamental shift toward reliability and fairness in AI systems. As AI technologies affect more facets of daily life, the role of context in our evaluation processes becomes increasingly important. Traditional methods that overlook this element can perpetuate biases and fail to adequately represent the diverse users they serve. By embracing contextualized evaluations, we can improve both the inter-rater agreement and the real-world relevance of our assessments while cultivating a more inclusive AI landscape that is sensitive to the needs of all users.

We encourage you to reflect on your current evaluation practices and consider how integrating context can transform your understanding of AI performance. Stay informed about emerging methodologies and contribute to discussions around this vital aspect of AI ethics and accountability. Together, let us advocate for a future where AI model assessments are fair, thorough, and reflective of the diverse realities they are designed to serve.

To further your understanding of AI evaluation frameworks, explore the insights Emergetech.io shares on the various models used in this area, and see GoCodeo for a discussion of the latest frameworks for evaluating AI systems.

As an illustration, consider a recent University of Maryland project in which researchers applied contextualized evaluations to AI models used in mental health applications. They found that traditional evaluation methods produced biased results that did not accurately reflect user experiences. Once context was integrated, such as the backgrounds and needs of diverse user groups, the researchers found significantly improved effectiveness and user satisfaction. The approach made the evaluations more accurate and showed how AI can address the distinct challenges that different users face in sensitive situations.
