In today’s rapidly evolving digital landscape, mastering LLM architecture is no longer just an option; it’s a necessity for any organization looking to maintain a competitive edge. As businesses increasingly harness the power of Generative AI to drive innovation, the challenge of building production-ready large language model (LLM) architectures has become a pressing concern. The AI regulation landscape and the necessity of machine learning governance have added another layer of complexity to these challenges. Achieving a robust and scalable architecture is fraught with difficulties, from understanding the multi-layered structure of prompt engineering to orchestrating model lifecycle governance effectively. The path to successful implementation is lined with hurdles such as ensuring data integrity, optimizing performance, and aligning models with business goals.

In this article, we will delve into the intricacies of LLM architecture, shedding light on the best practices that can help businesses navigate these challenges. By focusing on strategic architectural design, organizations can better position themselves for success in a world where large language models are poised to revolutionize software development and other industries.

Join us as we explore the integral components of LLM architecture, the significance of modular agents, and the critical role of prompt engineering in harnessing the true potential of Generative AI. The winners in this new landscape will undoubtedly be those who treat LLM architecture not merely as a technical requirement, but as a craft that fuels innovation.

Key Challenges in Building Production-Ready LLM Systems

Building production-ready large language model (LLM) systems presents hurdles in four areas: governance, orchestration, model lifecycle management, and user trust. The main challenges in each area are:

- Governance and Compliance
  - Lack of integrated governance frameworks: Many organizations do not build comprehensive governance structures into their AI initiatives.
  - Unclear ownership and accountability: The potential for misinformation or bias in LLM outputs emphasizes the need to establish clear ownership.
  - Legal and regulatory compliance: The rapid development of AI regulations makes following data privacy laws and ensuring thorough documentation critical.

- Orchestration and Infrastructure
  - Integration of DevOps and MLOps: Separate DevOps and MLOps practices may hinder efficiency. Unifying these can foster better collaboration.
  - Infrastructure complexity: Advanced infrastructure, including optimized hardware and cloud services, is necessary for effective LLM use.

- Model Lifecycle Management
  - Data quality and bias mitigation: Ensuring high-quality training data is vital, as poor data can cause inaccuracies.
  - Model drift and performance decay: Continuous monitoring is needed to counteract the performance degradation of LLMs over time.
  - Versioning and experiment tracking: Complex management of models and datasets necessitates effective tracking tools for reproducibility.

- User Trust and Transparency
  - Model interpretability: Lack of clarity in how LLMs derive their conclusions can affect user trust.
  - Transparency: Providing insights into model operations and output generation fosters greater trust from users.

Addressing these challenges demands a holistic approach that combines governance, operational efficiency, data integrity, and user education for successful LLM deployment in production environments.
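As one illustration of the continuous monitoring mentioned under model drift, the sketch below flags drift when a rolling average of quality scores falls below a baseline. The `DriftMonitor` class, the score scale, and the thresholds are all assumptions made for illustration; a real system would choose its own quality metric and alerting policy.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when the rolling mean quality score falls below a baseline.

    Hypothetical helper: the baseline, tolerance, and window are illustrative.
    """

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 5):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def record(self, score: float) -> bool:
        """Add a score; return True once the window is full and has drifted."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet to judge
        return mean(self.scores) < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=3)
# Simulated evaluation scores that decay over time.
alerts = [monitor.record(s) for s in [0.91, 0.89, 0.90, 0.80, 0.78, 0.76]]
```

Using a rolling window rather than single scores keeps one bad response from triggering an alert, at the cost of reacting a few samples later.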

| Layer | Purpose | Key Functionalities | Interdependencies |
|---|---|---|---|
| Prompt Layer | Serves as the interface for user interactions | Input processing, context management | Relies on Agent Layer for decision-making |
| Agent Layer | Executes tasks based on prompts | Task execution, decision-making, information retrieval | Depends on Prompt Layer for input; interacts with Orchestration Layer for task handling |
| Orchestration Layer | Manages workflows and lifecycle of agents and models | Workflow management, resource allocation, monitoring | Coordinates between Prompt and Agent Layers, ensuring smooth operation |
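To make the interdependencies in the table concrete, here is a minimal sketch of how the three layers might hand work to one another. Every name in it (`Prompt`, `prompt_layer`, `summarize_agent`, `lookup_agent`, `Orchestrator`) is hypothetical, and the agents return placeholder strings instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Prompt:
    """Prompt Layer output: cleaned input plus recent conversation context."""
    text: str
    context: List[str]


def prompt_layer(user_input: str, history: List[str]) -> Prompt:
    # Input processing and context management (keep the last three turns).
    return Prompt(text=user_input.strip(), context=history[-3:])


# Agent Layer: each agent executes one kind of task for a prompt.
Agent = Callable[[Prompt], str]


def summarize_agent(prompt: Prompt) -> str:
    return f"[summary of: {prompt.text}]"  # placeholder for a model call


def lookup_agent(prompt: Prompt) -> str:
    return f"[lookup result for: {prompt.text}]"  # placeholder for retrieval


class Orchestrator:
    """Orchestration Layer: routes prompts to agents and records each run."""

    def __init__(self, agents: Dict[str, Agent]):
        self.agents = agents
        self.log: List[str] = []

    def run(self, task: str, user_input: str, history: List[str]) -> str:
        prompt = prompt_layer(user_input, history)
        result = self.agents[task](prompt)
        self.log.append(task)  # minimal monitoring hook
        return result


orchestrator = Orchestrator({"summarize": summarize_agent, "lookup": lookup_agent})
answer = orchestrator.run("summarize", "  Q3 earnings report  ", history=[])
```

Keeping the agents as plain callables behind a registry is one possible design; it lets the orchestration layer add, swap, or retire agents without touching the prompt layer.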

LLM Adoption Statistics

The adoption of Large Language Models (LLMs) within production environments has surged in recent years. As organizations strive for greater scalability and efficiency in their operations, LLMs have become integral to their technology stack. Here are notable statistics and trends highlighting the growing adoption of LLMs:

- Global Adoption: Approximately 67% of organizations have integrated generative AI systems powered by LLMs into their operations. [Hostinger]

- Industry-Specific Trends:
  - The retail and e-commerce sector leads the global LLM market with a 27.5% share, leveraging LLMs to analyze customer data and improve personalized recommendations. [Hostinger]
  - In financial services, 60% of Bank of America’s clients utilize LLM-based solutions for investment and retirement planning tasks. [Hostinger]

- Enterprise Adoption: By the end of 2023, 62% of U.S. enterprises had incorporated generative AI into at least one core business process, particularly in marketing, where 78% of departments are using these technologies for content creation and optimization. [SQ Magazine]

- Multi-Model Strategies: A survey indicated that 42% of AI teams have adopted two or more LLMs in their production environments, reflecting a shift towards strategies that maximize scalability and performance. [Adam Holter]

- Market Growth: The LLM market was valued at $4.5 billion in 2023 and is projected to reach $82.1 billion by 2033, indicating a compound annual growth rate (CAGR) of 33.7%. [Market.us]

These metrics illustrate not only the rapid deployment of LLMs across industries but also their significant role in transforming traditional business processes into scalable, efficient solutions, positioning LLMs as a cornerstone of modern software architecture.
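The market growth figure can be sanity-checked from the two valuations quoted above. The short calculation below applies the standard compound annual growth rate formula, (end / start)^(1 / years) - 1, to the 2023 and 2033 values; the function name `cagr` is simply a label chosen here.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Values in billions of USD, taken from the market growth bullet.
rate = cagr(start_value=4.5, end_value=82.1, years=2033 - 2023)
print(f"Implied CAGR: {rate:.1%}")
```

The result agrees with the quoted rate to within rounding, so the three numbers in that bullet are internally consistent.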

Prompt Engineering as an Interface Layer

Prompt engineering has emerged as a crucial component in the interaction between users and large language models (LLMs). It represents the primary interface layer through which these complex models can be engaged effectively. Essentially, prompt engineering involves the art and science of creating queries, or prompts, that guide LLMs in generating contextually relevant responses. This practice is not just about crafting questions; it is about understanding the underlying architecture of LLMs and leveraging that knowledge to unlock their full potential.

The significance of prompt engineering cannot be overstated. In the realm of LLMs, where the model’s outputs are based on probabilistic predictions rather than deterministic rules, the way a prompt is formulated can greatly influence the quality and relevance of the generated responses. Crafting prompts that are clear, concise, and well-structured allows users to effectively channel their inquiries, aligning model behavior with specific objectives.
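One way to keep prompts clear and well-structured is to assemble them from named parts instead of free-form strings. The sketch below uses Python's standard `string.Template`; the section names (role, task, constraints, input) and the `build_prompt` helper are illustrative assumptions, not a prescribed format.

```python
from string import Template
from typing import List

# Hypothetical prompt layout: each section states one thing the model needs.
PROMPT = Template(
    "Role: $role\n"
    "Task: $task\n"
    "Constraints: $constraints\n"
    "Input:\n$user_input"
)


def build_prompt(role: str, task: str, constraints: List[str], user_input: str) -> str:
    """Fill the template; substitute() raises KeyError if a field is missing."""
    return PROMPT.substitute(
        role=role,
        task=task,
        constraints="; ".join(constraints),
        user_input=user_input.strip(),
    )


prompt = build_prompt(
    role="support assistant",
    task="answer the customer's question",
    constraints=["two sentences max", "cite the order ID"],
    user_input="  Where is my order?  ",
)
```

Because every prompt passes through one function, changes to wording or structure happen in a single place and can be reviewed like any other code change.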

Moreover, prompt engineering serves as a bridge between human intent and machine understanding. By establishing a clear directive through prompts, users can minimize the noise that may arise from ambiguous or poorly constructed questions. This clarity is vital for applications in various domains, from customer service chatbots to creative writing assistants, where specificity can dramatically enhance the user experience.

As organizations strive to implement LLMs in production environments, mastering the nuances of prompt engineering becomes paramount. It is an iterative process that demands experimentation and fine-tuning. Additionally, the rapid evolution of LLM capabilities requires prompt engineers to stay informed about advancements in model architectures and functionalities to create effective prompts that leverage these new features.
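The iterative experimentation described here can be made repeatable by scoring prompt variants against a fixed set of test cases. In the sketch below, `fake_model` is a stand-in for a real LLM call and exact-match scoring is a deliberately simple assumption; real evaluations usually need richer metrics.

```python
from typing import Callable, Dict, List, Tuple


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: replies tersely only when asked to."""
    return "Paris" if "one word" in prompt else "The capital of France is Paris."


def score_variant(
    template: str,
    cases: List[Tuple[str, str]],
    model: Callable[[str], str],
) -> float:
    """Fraction of cases whose model output exactly matches the expected answer."""
    hits = sum(model(template.format(question=q)) == expected for q, expected in cases)
    return hits / len(cases)


variants: Dict[str, str] = {
    "v1": "Answer the question: {question}",
    "v2": "Answer in one word: {question}",
}
cases = [("What is the capital of France?", "Paris")]

scores = {name: score_variant(t, cases, fake_model) for name, t in variants.items()}
best = max(scores, key=scores.get)  # variant with the highest score
```

Swapping `fake_model` for a real client call turns the same loop into a small regression suite that can be rerun whenever the model or the prompts change.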

In summary, prompt engineering acts as the primary interface layer for large language models, enabling effective communication while shaping the model’s outputs. Its role is integral in fostering a relationship between users and machines, underscoring the importance of thoughtful prompt creation in the successful deployment of LLMs across diverse applications. By treating prompt engineering as both an art and a technical skill, organizations can greatly enhance the performance and usability of their LLM systems, paving the way for innovative applications of generative AI.

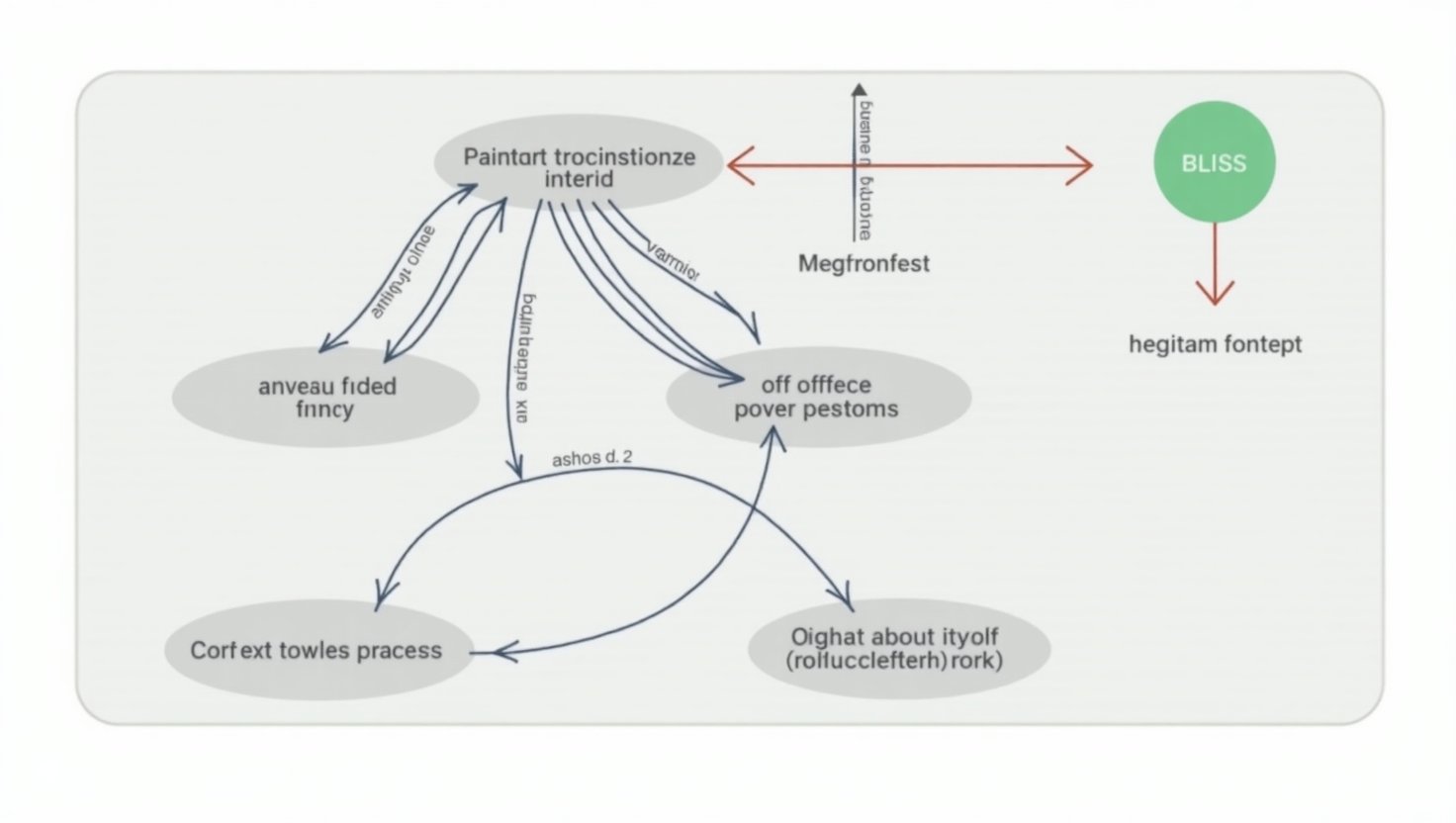

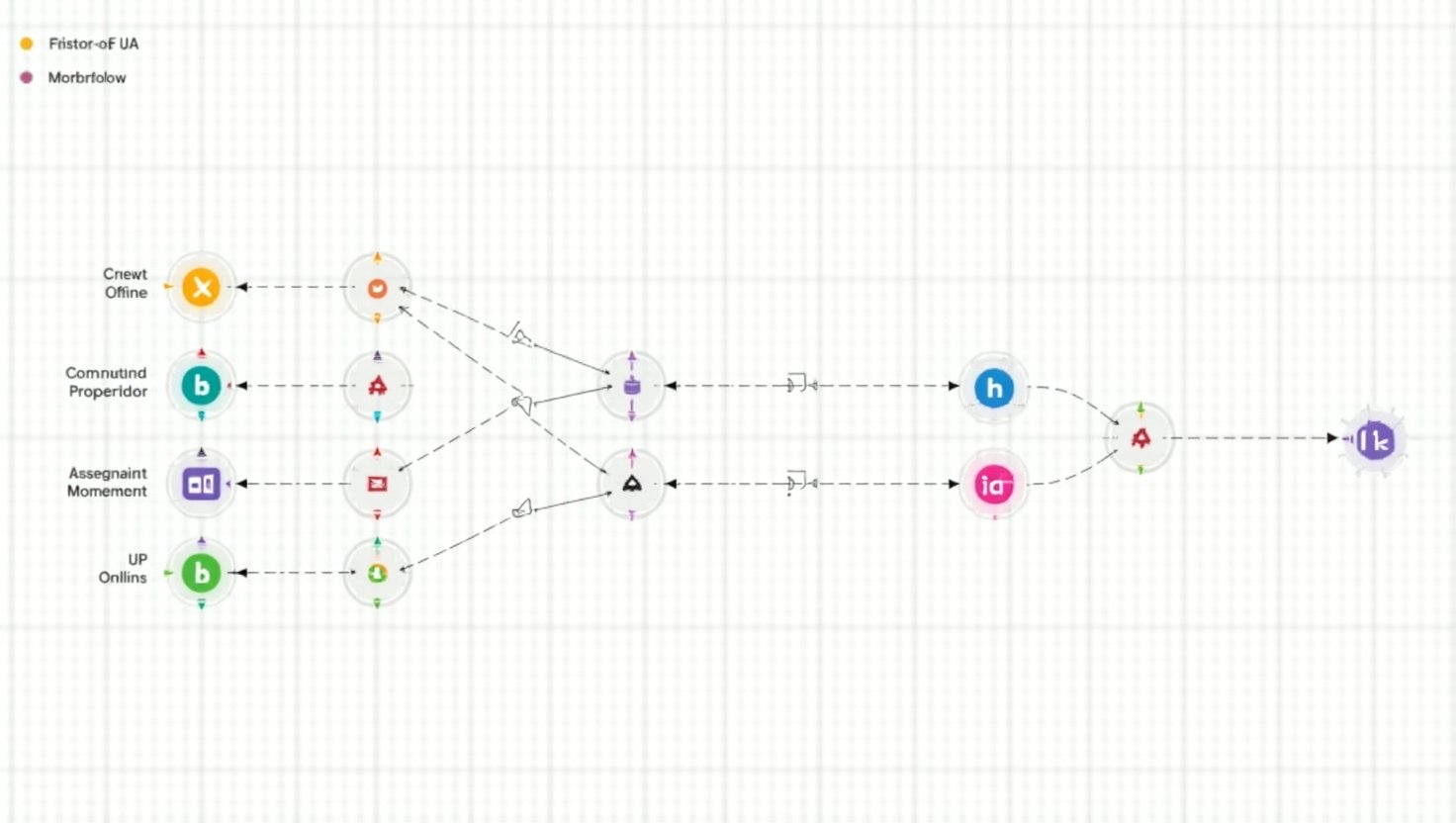

Figure: Visual representations of the three layers in LLM architecture (Prompt Layer, Agent Layer, Orchestration Layer).

Expert Quotes on LLM Architectures

Leaders across the AI industry have spoken about the scale of AI’s impact and, in some cases, about the limits and data requirements of LLMs. Their remarks give context for why LLM architecture deserves careful craft:

- Sundar Pichai, CEO of Google and Alphabet: “AI is probably the most important thing humanity has ever worked on. I think of it as something more profound than electricity or fire.”
- Jensen Huang, Founder and CEO of NVIDIA: “AI is the single most powerful force of our time.”
- Sam Altman, CEO of OpenAI: “AI is going to change the world more than anything in the history of humanity. More than electricity.”
- Yann LeCun, Chief AI Scientist at Meta: “Today’s LLMs are typically trained by 30 trillion tokens… This will take any average human nearly 400,000 years to complete the reading… It means that we will never reach AGI by training LLMs from text-only data.”
- Thomas Wolf, Co-founder and Chief Science Officer at Hugging Face: “It’s not enough to just scrub the internet to train LLM. Quality data counts – we all are going back to this truth.”

Taken together, these quotes point to the transformative potential of AI, while the remarks from LeCun and Wolf are a reminder that training data and model limits shape what LLM architectures can deliver.

AI Governance in LLM Architectures

In the rapidly advancing field of artificial intelligence, particularly with the rise of large language models (LLMs), effective governance has become paramount. AI governance refers to the framework of principles, policies, and practices that guide the deployment and management of AI technologies. When integrated into LLM architectures, robust governance frameworks facilitate better model management, ensure compliance with regulations, and ultimately lead to improved outcomes.

LLMs, with their inherent complexity and the probabilistic nature of their outputs, present unique governance challenges. One primary aspect of governance in this context is the establishment of clear protocols for model management, which involves not only overseeing the lifecycle of the models but also ensuring that they align with ethical standards and organizational goals. By implementing structured processes for model development, deployment, and retirement, organizations can mitigate risks associated with biases and inaccuracies in model outputs.
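A structured process for development, deployment, and retirement can be expressed as a small state machine that refuses transitions the policy does not allow. The stages, the transition table, and the `ModelRecord` class below are assumptions chosen for illustration; an organization's actual lifecycle may have more stages and approval steps.

```python
from enum import Enum
from typing import List, Tuple


class Stage(Enum):
    DEVELOPMENT = "development"
    DEPLOYED = "deployed"
    RETIRED = "retired"


# Hypothetical policy: which stage changes are permitted.
ALLOWED = {
    Stage.DEVELOPMENT: {Stage.DEPLOYED, Stage.RETIRED},
    Stage.DEPLOYED: {Stage.RETIRED},
    Stage.RETIRED: set(),
}


class ModelRecord:
    """Tracks a model's lifecycle stage, its owner, and who approved each change."""

    def __init__(self, name: str, owner: str):
        self.name = name
        self.owner = owner
        self.stage = Stage.DEVELOPMENT
        self.history: List[Tuple[Stage, str]] = [(Stage.DEVELOPMENT, owner)]

    def advance(self, new_stage: Stage, approved_by: str) -> None:
        if new_stage not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {new_stage.value} is not allowed")
        self.stage = new_stage
        self.history.append((new_stage, approved_by))  # simple audit trail


record = ModelRecord("support-bot", owner="ml-platform")
record.advance(Stage.DEPLOYED, approved_by="risk-team")
```

Recording the approver alongside each transition gives a simple audit trail that ties lifecycle changes to an accountable person or team.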

Additionally, effective orchestration across various layers of LLM architectures—prompt, agent, and orchestration layers—requires a cohesive governance strategy. This strategy must encompass workflow management and ensure smooth interactions between different model components. Such orchestration involves coordinating data flows and ensuring that outputs from one layer correctly serve as inputs for another. A well-governed LLM architecture allows organizations to harness the full potential of their models while maintaining control over the implications of their use.
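Ensuring that outputs from one layer correctly serve as inputs for another usually means checking them at the boundary. The sketch below validates an agent's result before the next layer consumes it; the `AgentResult` fields and the specific rules (non-empty output, a size limit, sources required for lookups) are hypothetical examples of such checks.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class AgentResult:
    """Hypothetical contract for what an agent hands to the next layer."""
    task: str
    output: str
    sources: Tuple[str, ...]


def validate_handoff(result: AgentResult, max_chars: int = 2000) -> AgentResult:
    """Raise ValueError for output the next layer should not consume."""
    if not result.output.strip():
        raise ValueError("empty output")
    if len(result.output) > max_chars:
        raise ValueError("output exceeds size limit")
    if result.task == "lookup" and not result.sources:
        raise ValueError("lookup results must cite at least one source")
    return result


checked = validate_handoff(
    AgentResult(task="lookup", output="Torque spec is 40 Nm.", sources=("manual-7",))
)
```

Failing loudly at the handoff keeps a malformed result from propagating through later steps, where the root cause would be harder to trace.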

Moreover, governance plays a critical role in enhancing transparency and fostering trust among users. By establishing standards for model interpretability and providing clear documentation on model behavior, organizations can help mitigate concerns surrounding the accountability of LLM outputs. As users become increasingly aware of AI’s impact, transparent practices in governance can not only democratize the benefits of AI but also cultivate a responsible AI landscape where ethical considerations are prioritized.

In conclusion, the integration of effective AI governance within LLM architectures is essential for maximizing the advantages of generative AI. It serves as a foundation for sound model management and orchestration, leading to enhanced productivity, reduced risk, and improved user trust. Organizations that actively embrace comprehensive governance frameworks are better equipped to navigate the complexities of LLMs, ultimately paving the way for their successful deployment and integration into the fabric of business operations.

Conclusion

In summary, building production-ready LLM architectures involves intricate layers of governance, orchestration, and specialized design. As we have discussed, the modern LLM architecture is composed of three critical layers: the Prompt Layer, which serves as an interface; the Agent Layer, responsible for task execution; and the Orchestration Layer, ensuring the seamless operation and lifecycle management of models.

The shift towards LLMs represents not only technological advancement but a paradigm change in how businesses operate and scale. One key takeaway from our discussion is the importance of mastering the architecture as a craft rather than viewing it solely as a technical requirement. Teams that invest the time to refine their architectural approach stand to gain a significant competitive edge in the market.

Moreover, effective prompt engineering emerges as an essential skill in leveraging the potential of LLMs. As organizations learn to craft precise prompts, they can enhance user interactions and ensure that the models align with strategic business objectives. This mastery over architecture and prompt engineering not only leads to improved accuracy and performance but also fosters user trust through transparency and governance.

Ultimately, those who embrace the nuanced complexities of LLM architectures will pave the way for innovative applications of generative AI, positioning themselves as leaders in a fast-evolving landscape that values both creativity and efficiency. The journey towards effective LLM implementation is ongoing, and organizations that prioritize continuous learning and adaptation will be best poised to capitalize on the future of AI-driven automation and intelligence.

Next Steps for Organizations

- Skill Development: Invest in training programs focused on prompt engineering and LLM architecture for teams to better understand the intricacies of LLMs.

- Framework Establishment: Develop clear governance frameworks that articulate roles and responsibilities related to model management and compliance.

- Iterative Testing: Regularly conduct tests on LLM outputs to refine prompts and evaluate model performance to ensure quality over time.

- Feedback Mechanisms: Implement feedback loops involving end-users to understand their experiences and adjust prompt designs accordingly.

- Continuous Learning: Stay updated with advancements in LLM technology and architectures to maintain competitive advantage and innovation capacity.
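The feedback mechanisms step can start very small. The sketch below collects helpful or not-helpful votes per prompt version and lists versions whose approval rate falls below a threshold; the `FeedbackLog` class, the 70% threshold, and the minimum vote count are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, List


class FeedbackLog:
    """Collects end-user votes per prompt version (hypothetical helper)."""

    def __init__(self) -> None:
        self._votes: Dict[str, List[bool]] = defaultdict(list)

    def record(self, prompt_version: str, helpful: bool) -> None:
        self._votes[prompt_version].append(helpful)

    def approval_rate(self, prompt_version: str) -> float:
        votes = self._votes.get(prompt_version, [])
        return sum(votes) / len(votes) if votes else 0.0

    def needs_review(self, threshold: float = 0.7, min_votes: int = 3) -> List[str]:
        """Versions with enough votes whose approval rate is below the threshold."""
        return sorted(
            version
            for version, votes in self._votes.items()
            if len(votes) >= min_votes and sum(votes) / len(votes) < threshold
        )


log = FeedbackLog()
for helpful in (True, True, False):
    log.record("v1", helpful)
for helpful in (True, True, True):
    log.record("v2", helpful)
flagged = log.needs_review()
```

Requiring a minimum number of votes before flagging a version avoids reacting to one or two early responses.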

Case Studies on Successful LLM Implementations

Several organizations have successfully implemented production-ready Large Language Models (LLMs) across various industries, yielding significant improvements in efficiency, accuracy, and customer satisfaction. Below are key case studies highlighting these implementations, along with insights and best practices derived from their experiences:

1. Financial Services: Automated Report Summarization

A large investment bank deployed an LLM to automatically summarize extensive quarterly earnings reports and market analyses. By fine-tuning a general model on financial news and reports, they achieved:

- 70% Reduction in Analyst Reading Time: The system extracted key metrics and generated concise summaries, allowing analysts to focus on deeper analysis and client advisory.

Key Insight: Tailoring LLMs to specific industry data enhances their effectiveness in automating complex tasks.

2. E-commerce: Personalized Product Recommendations

An online retailer integrated an LLM with their recommendation engine, analyzing customer reviews, browsing history, and purchase data to generate nuanced product suggestions. This led to:

- 15% Increase in Click-Through Rates

- 10% Boost in Sales from Recommendation-Driven Purchases

Lesson Learned: LLMs can significantly enhance personalization strategies, leading to improved customer engagement and sales.

3. Healthcare: Clinical Diagnosis Assistance

OpenAI collaborated with a major healthcare provider to develop an LLM for clinical diagnosis support, resulting in:

- 20% Reduction in Diagnostic Errors

- Shortened Patient Waiting Times

Best Practice: Customizing LLMs to meet specific operational needs can enhance accuracy in high-stakes environments.

4. Manufacturing: Knowledge Sharing Enhancement

A manufacturing company implemented an LLM-based system to retrieve information from extensive factory documentation and expert knowledge. The system efficiently answered operator queries and facilitated knowledge sharing, leading to:

- Quicker Information Retrieval

- More Efficient Issue Resolution

Insight: Integrating LLMs into knowledge management systems can streamline information access and problem-solving processes.

5. Hospitality: AI-Powered Booking System

Accor Group developed a generative AI-powered booking application, implementing a comprehensive testing strategy and feedback system. This approach ensured:

- Consistent and Acceptable Chatbot Responses

- Effective End-to-End System Functionality

Best Practice: Maintaining rigorous testing and feedback mechanisms is crucial for the success of AI applications in customer-facing roles.

6. Energy and Utilities: AI-Powered Analytics

Shell utilized LLMs to analyze operational data, predicting customer demand and uncovering gaps, which enabled:

- Better Decision-Making

- Operational Cost Savings

Lesson Learned: Leveraging LLMs for data analysis can drive efficiency and cost-effectiveness in resource management.

7. Food Processing: Research Analysis Automation

A food processing company implemented a fine-tuned GPT-3 model to analyze vast amounts of research papers, resulting in:

- Improved Efficiency: Quick access to research papers enabled prompt, informed decisions.

- Reduced Workload: The model’s ability to extract and summarize essential information significantly reduced manual effort.

- Enhanced Innovation: Streamlined access to research facilitated the identification of new opportunities and trends.

- Reduced Costs: Savings on resources and time previously spent on manual research analysis.

Insight: Implementing LLMs can transform labor-intensive tasks into efficient, automated processes, fostering innovation and cost savings.

8. Cybersecurity: Mapping Regulations to Policies

A large financial services company used a platform fine-tuned for the cybersecurity domain to map regulations to policies and controls, reducing the process from months to a few days.

Lesson Learned: LLMs’ ability to understand semantics can streamline complex regulatory compliance tasks.

9. Customer Service: AI-Assisted Support

A global e-commerce platform implemented a customer service chatbot powered by an LLM, trained to understand and respond to a wide range of customer queries. The chatbot reduced average response times by 70% and handled 80% of customer queries without human intervention.

Best Practice: Deploying LLM-powered chatbots can significantly enhance customer service efficiency and satisfaction.

10. Healthcare: Adverse Event Detection

A pharmaceutical company developed an ML-driven solution using Amazon SageMaker to detect adverse events from various data sources, such as social media feeds and emails. The solution fine-tuned models on medical data, improving the detection and monitoring of adverse events.

Insight: Fine-tuning LLMs on domain-specific data enhances their applicability in critical healthcare monitoring tasks.

These case studies demonstrate the versatility and transformative potential of LLMs across diverse sectors. Key takeaways include the importance of customizing models to specific industry needs, integrating rigorous testing and feedback mechanisms, and leveraging LLMs to automate complex tasks, thereby enhancing efficiency, accuracy, and customer satisfaction.

| Case Study | Industry | Key Metrics/Results | Key Insight |
|---|---|---|---|
| Automated Report Summarization | Financial Services | 70% Reduction in Analyst Reading Time | Tailoring LLMs to specific industry data enhances effectiveness. |
| Personalized Product Recommendations | E-commerce | 15% Increase in Click-Through Rates, 10% Boost in Sales | LLMs can significantly enhance personalization strategies. |
| Clinical Diagnosis Assistance | Healthcare | 20% Reduction in Diagnostic Errors, Shortened Wait Times | Customizing LLMs enhances accuracy in high-stakes environments. |
| Knowledge Sharing Enhancement | Manufacturing | Quicker Information Retrieval, Efficient Issue Resolution | Integrating LLMs into knowledge management streamlines access. |
| AI-Powered Booking System | Hospitality | Consistent Chatbot Responses, Effective System Functionality | Rigorous testing mechanisms are crucial for customer-facing AI. |
| AI-Powered Analytics | Energy and Utilities | Better Decision-Making, Operational Cost Savings | LLMs for data analysis drive efficiency and cost-effectiveness. |
| Research Analysis Automation | Food Processing | Improved Efficiency, Reduced Workload, Enhanced Innovation | LLMs can transform labor-intensive tasks into efficient processes. |
| Mapping Regulations to Policies | Cybersecurity | Process Reduction from Months to Days | LLMs streamline complex regulatory compliance tasks. |
| AI-Assisted Support | Customer Service | 70% Reduction in Response Times, 80% Query Handling | LLMs enhance customer service efficiency and satisfaction. |
| Adverse Event Detection | Healthcare | Improved Detection of Adverse Events | Fine-tuning LLMs on domain-specific data enhances applicability. |