In recent years, advancements in artificial intelligence have unfolded at a breathtaking pace, transforming industries and how we interact with technology. However, with great power comes great responsibility, and the need for robust evaluation systems has never been more critical. Enter the realm of AI Agent Evaluation Frameworks, which serve as essential tools in assessing the performance, safety, and reliability of AI agents.

As we delve into this tutorial, we will explore the creation of an advanced AI evaluation framework with a focus on key metrics. As noted,

“In this tutorial, we walk through the creation of an advanced AI evaluation framework designed to assess the performance, safety, and reliability of AI agents.”

This framework is not just a technical necessity but a vital component in ensuring that AI systems function effectively and ethically in a wide array of applications, providing peace of mind to developers and users alike.

Key Metrics for Evaluation

In the development of advanced AI agent evaluation frameworks, various metrics are crucial for ensuring precision, reliability, and ethical integrity. Here are the key metrics included in the evaluation framework, highlighting their importance and practical implications:

-

Semantic Similarity

This metric assesses how closely the AI agent’s output aligns with a given reference, whether it be a text, response, or data format.

By measuring semantic similarity, developers can ensure that the AI’s language comprehension mirrors human-like understanding, which is vital for applications in natural language processing, adhering to AI ethics principles.

-

Hallucination Detection

Hallucinations in AI outputs refer to instances where the model generates incorrect or false information confidently.

Effective hallucination detection is critical for maintaining user trust and safety, especially in applications such as medical diagnosis or legal advice, where misinformation can lead to severe consequences. This links directly to the need for robust machine learning evaluation practices.

-

Factual Accuracy

This metric evaluates the veracity of the information provided by the AI agent.

High factual accuracy is essential, particularly in fields like journalism and education, where the credibility of information is paramount.

A focus on this metric helps curtail the spread of misinformation and enhances the overall integrity of AI applications, reflecting the significance of using reliable AI performance metrics.

-

Toxicity

Measuring toxicity involves assessing the likelihood of an AI-generated response containing harmful or offensive language.

By analyzing toxicity, developers can create safer environments for users, especially in interactive applications such as chatbots and social media platforms, where user interaction can be sensitive.

-

Bias Analysis

This metric investigates the presence of bias in AI outputs, ensuring that the AI does not perpetuate or amplify societal biases.

Effective bias analysis is crucial for equitable AI applications in diverse sectors like hiring practices and law enforcement, promoting fairness and inclusivity.

Practical Implications

The integration of these metrics in AI evaluation frameworks not only bolsters the technical performance of AI systems but also aligns their functionalities with ethical standards and societal expectations, reinforcing the role of AI ethics. As AI technologies proliferate across various sectors, rigorous evaluation metrics will play a pivotal role in ensuring AI agents act responsibly and reliably in real-world scenarios, guided by comprehensive machine learning evaluation strategies.

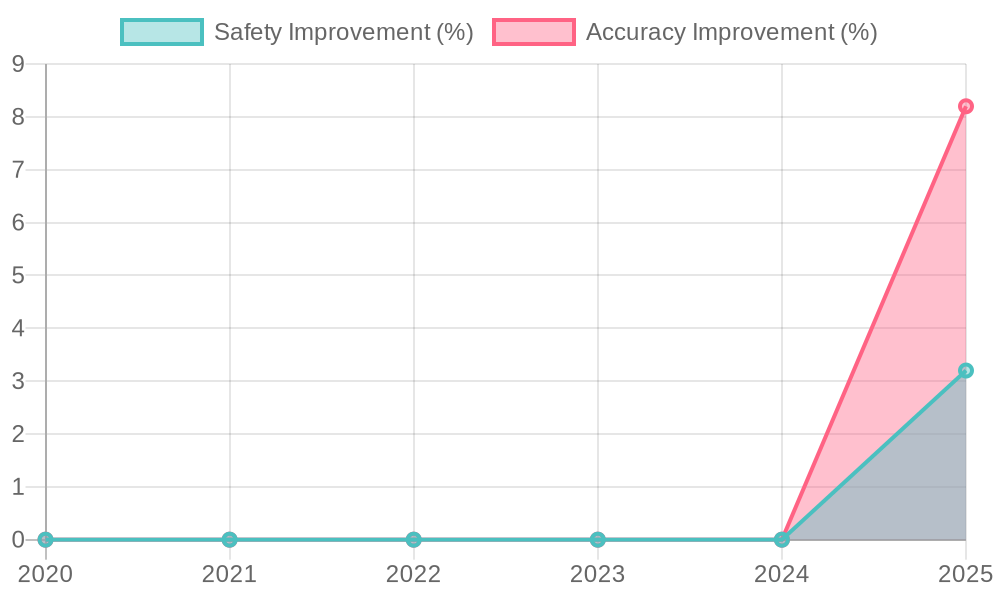

| Metric | Criteria | Advantages | Limitations |

|---|---|---|---|

| Safety | Measures the potential harm AI could cause | Helps in designing safer AI systems | May not cover all safety aspects or real-world scenarios |

| Accuracy | Evaluates correctness of AI outputs | Ensures reliable performance in critical applications | Can be hard to define a correct answer in ambiguous cases |

| Bias Analysis | Investigates systemic bias in outputs | Promotes fairness and equity in AI applications | Requires diverse datasets for effective evaluation |

| Performance Visualization | Assesses AI performance over time | Provides intuitive insights for stakeholders | May not capture all nuances of AI behavior |

The Role of Python in Framework Development

Python plays a crucial role in the development of the AI evaluation framework, primarily due to its object-oriented programming (OOP) paradigm. This programming style provides a robust foundation that is pivotal for creating modular, scalable, and extensible software applications.

Object-Oriented Programming Benefits

- Encapsulation: Python’s OOP approach allows the encapsulation of code into independent objects that can manage their own data. This is particularly beneficial in AI frameworks that require a variety of metrics and evaluation techniques. By encapsulating functionality, developers can easily modify or extend components of the framework without impacting other parts of the system.

- Inheritance: The ability to create new classes based on existing ones promotes code reusability. In the context of an AI evaluation framework, developers can implement shared functionality across multiple metrics, saving time and resources while ensuring consistency in evaluation criteria.

- Polymorphism: With polymorphism, the framework can manage different evaluation metrics uniformly. It allows developers to define methods in different classes with the same name, providing flexibility in method implementation. This is particularly important when integrating diverse evaluation strategies such as toxicity measures or bias analysis, enabling them to interoperate seamlessly within the framework.

Scalability and Extensibility

Python’s OOP principles significantly enhance the scalability of the evaluation framework. New metrics can be added easily without overhauling the existing architecture, thereby accommodating future growth. For instance, as AI evaluation needs evolve, additional testing criteria can be integrated just by extending the existing classes.

Furthermore, the extensibility of Python enables developers to adapt and enhance their evaluation framework over time, catering to specific user requirements or emerging AI technologies. This ability to modify components and functionalities as needed ensures that the framework remains relevant and capable of meeting complex AI challenges.

Connection to Multithreading

Another significant advantage of Python in framework development is its support for multithreading. By utilizing multithreading, the framework can perform concurrent evaluations of different AI agents or metrics, vastly improving efficiency. For example, while one thread processes toxicity metrics, another can evaluate factual accuracy simultaneously, resulting in faster evaluations and more effective resource utilization. This capability is particularly important in real-time applications where responsiveness is crucial.

In summary, Python’s object-oriented programming not only provides a strong foundational structure for the AI evaluation framework but also enhances its scalability and extensibility. Coupled with effective multithreading, it allows the framework to operate efficiently and adaptively, meeting the demands of advanced AI evaluation.

Advanced Techniques Used in AI Agent Evaluation

The evaluation of AI agents requires precision and adaptability to ensure accurate results and performance assessments. Two advanced techniques that significantly enhance this evaluation process are adaptive sampling and confidence intervals. Below is an overview of how these techniques are applied in the framework:

Adaptive Sampling

Adaptive sampling is a method that optimally selects data points to be evaluated based on previous sampling results. By focusing on areas of high uncertainty or variance, adaptive sampling reduces the number of evaluations needed while maintaining accuracy. This technique is particularly beneficial in evaluating AI models because it allows evaluators to concentrate resources on the most informative instances, leading to a more efficient evaluation process.

For instance, when conducting performance evaluations on AI agents, rather than sampling uniformly across the entire dataset, adaptive sampling adjusts the selection of points based on where the model performs poorly or is uncertain. This not only accelerates the evaluation but also enhances the model’s learning by exposing it to more challenging cases.

Confidence Intervals

Confidence intervals provide a statistical range that estimates where a parameter lies with a certain level of confidence. In AI evaluations, constructing robust confidence intervals around performance metrics is crucial for understanding the reliability and variability of those metrics. This technique quantifies the uncertainty related to the estimates of a model’s performance, guiding decision-makers in evaluating AI systems.

Using confidence intervals, evaluators can assess the robustness of AI predictions, especially when results show wide variance. For example, by determining the confidence interval of an AI’s accuracy across multiple trials, stakeholders can better understand whether an AI agent’s performance is consistently reliable or whether there are significant fluctuations.

Significance and Impact

Both adaptive sampling and confidence intervals bolster the evaluation framework by providing a meaningful way to assess AI agent performance efficiently. Adaptive sampling not only reduces the workload on evaluators but also drives a more informative analysis of stochastic behaviors in AI agents. Meanwhile, confidence intervals solidify the credibility of results by contextualizing performance metrics within statistically sound boundaries.

Incorporating these techniques allows organizations to make data-driven decisions with greater confidence, ensuring the safety and reliability of AI applications in real-world settings. As AI systems evolve, the integration of these advanced techniques assures that evaluations remain relevant and reflective of actual performance.

Case Studies of AI Evaluation Frameworks

AI evaluation frameworks have been successfully adopted in various industries, showcasing their effectiveness in real-world applications. Here are notable case studies that highlight user adoption data and outcomes:

-

IBM: Personalized Learning in Corporate Training

IBM integrated its AI platform, Watson, to offer personalized learning experiences. By analyzing job roles and performance data, Watson tailored learning paths for each employee. This initiative resulted in a reduction of training time by 40% and increased course completion rates. According to IBM, “The personalized learning approach has not just transformed our training methods but has significantly enhanced employee engagement and satisfaction.” (Source) -

Walmart: AI-Powered Virtual Reality Training

Walmart employed AI-powered VR training modules to enhance employee readiness for various scenarios. The AI monitored performance and provided tailored feedback, achieving a 15% performance improvement while reducing training time by 95%. Walmart noted that the AI system empowered employees to excel in customer interactions more confidently. -

Australian Government: Generative AI Pilot

The Australian Government executed a six-month AI pilot using Microsoft 365 Copilot across 60 agencies with 5,000 staff participating. Users reported saving about one hour per task, with 40% reallocating their time to higher-value activities. The pilot highlighted challenges like incorrect AI outputs, informing future adoption strategies. According to a government report, “The AI pilot allowed us to streamline workflows significantly while acknowledging the importance of training our personnel.” (Source) -

General Electric (GE): AI in Aviation

GE utilized AI-powered predictive analytics within its aviation sector, analyzing data from over 100 aircraft systems. This strategy led to a 10% reduction in maintenance costs and a 15% increase in operational efficiency. The implementation allowed GE to predict failures before they occurred and as stated by a GE representative, “AI has transformed our operations, turning insights into actionable strategies.” (Source) -

Freeport-McMoRan: AI in Mining

Freeport-McMoRan collaborated with data scientists to implement an AI engine optimizing mineral processing. The project improved output by 10% in a pilot site, with expectations of a 5-10% increase across all mines when scaled. A company spokesperson remarked, “This AI initiative is akin to creating the capacity of a new plant, enhancing both productivity and efficiency.” (Source) -

JPMorgan Chase: AI in Fraud Detection

JPMorgan Chase deployed AI algorithms to enhance its fraud detection capabilities, achieving a notable decrease in false positives. The AI processes transaction data in real-time to identify suspicious anomalies. The approach has not only reduced fraud but increased customer trust, furthering JPMorgan’s commitment to advanced technology in finance. (Source)

These diverse case studies highlight the effectiveness of AI evaluation frameworks and their ability to drive efficiency and innovation in various sectors. They show how tailored implementations can lead to significant user adoption data and measurable outcomes.

In conclusion, the journey to establishing a robust AI Agent Evaluation Framework is not only vital for the integrity and safety of AI applications but also pivotal for shaping the future of technology. Throughout this article, we have explored the essential metrics, advanced techniques, and real-world case studies that underline the significance of a comprehensive evaluation strategy. Embracing such a framework will empower developers and businesses alike, enabling them to refine their AI systems and foster trust among users.

As AI continues to permeate every aspect of our lives, adopting a rigorous evaluation framework will be indispensable in ensuring that these systems function effectively and ethically. To inspire action, let us remember the words of Asif Razzaq: “We’ve equipped ourselves with a modular, extensible, and interpretable evaluation system that can be customized for real-world AI applications across industries.” It is time to take the next step and commit to advancing AI responsibly, safeguarding our future with the profound potential of this technology.

The Role of Python in Framework Development

Python plays a vital role in developing the AI evaluation framework, mainly because of its object-oriented programming (OOP) paradigm. This programming style offers a strong foundation essential for creating modular, scalable, and extensible software applications.

Object-Oriented Programming Benefits

- Encapsulation: Python’s OOP method allows code to be organized into independent objects that handle their own data. This is particularly useful in AI frameworks where various metrics and evaluation techniques are needed. Encapsulating functionality enables developers to modify or extend components of the framework without affecting other parts of the system.

- Inheritance: The ability to create new classes based on existing ones promotes code reuse. In the context of an AI evaluation framework, developers can implement shared functionality across multiple metrics, saving time while ensuring consistency in evaluation criteria.

- Polymorphism: Polymorphism allows the framework to manage different evaluation metrics uniformly. Developers can define methods in various classes with the same name, providing flexibility in implementation. This is crucial when integrating diverse evaluation strategies such as toxicity measures or bias analysis, enabling seamless interoperation within the framework.

Scalability and Extensibility

Python’s OOP principles enhance the scalability of the evaluation framework. New metrics can be added easily without an overhaul of existing architecture, accommodating future growth. For example, as AI evaluation needs change, additional testing criteria can be integrated simply by extending existing classes.

Furthermore, Python’s extensibility allows developers to adapt their evaluation framework over time, catering to specific user requirements or emerging AI technologies. This capacity for modification ensures that the framework remains relevant and able to address complex AI challenges.

Connection to Multithreading

Another significant advantage of Python in framework development is its support for multithreading. By utilizing this feature, the framework can perform concurrent evaluations of different AI agents or metrics, vastly improving efficiency. For instance, while one thread processes toxicity metrics, another can evaluate factual accuracy simultaneously, resulting in faster evaluations and more effective resource use. This capability is vital in real-time applications where responsiveness matters.

In summary, Python’s object-oriented programming not only provides a strong structural foundation for the AI evaluation framework but also enhances its scalability and extensibility. Together with effective multithreading, it allows the framework to operate efficiently and adaptively, meeting the demands of advanced AI evaluation.

To create a seamless transition into the practical applications of the AI evaluation framework, we must acknowledge the pivotal role of advanced techniques like adaptive sampling and confidence intervals. These methods not only enhance the precision of our evaluations but also lay the groundwork for real-world applications.

As we prepare to explore the case studies demonstrating how organizations have implemented these frameworks effectively, it is essential to understand the direct correlation between the advanced techniques discussed and their real-world implications. The subsequent section aims to illustrate the practical efficacy of these frameworks through diverse industry examples, showcasing how tailored implementations have achieved measurable outcomes and improved operational efficiencies.